Design adaptable control and learning frameworks so your robot generalizes across tasks: combine modular hardware, meta-learning algorithms, and online adaptation that updates policies on the fly.

Cognitive Architectures for Adaptive Control

Architectures integrate perception, memory, and planning so you can reconfigure behavior across tasks with minimal retraining and maintain consistent performance.

Neural Network Foundations for Task Versatility

Networks that combine recurrent modules with attention mechanisms let you generalize learned primitives to new objectives from a few demonstrations and adapt internal representations online.
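The attention half of that combination can be sketched as scaled dot-product attention: a query (for example, an embedding of the current objective) weights a set of stored primitive embeddings and blends their values. This is a minimal pure-Python illustration, assuming embeddings already come from some trained encoder; the `attention` function name and the toy vectors are hypothetical.

```python
import math
from typing import List, Sequence


def attention(query: Sequence[float],
              keys: List[Sequence[float]],
              values: List[Sequence[float]]) -> List[float]:
    """Scaled dot-product attention for a single query vector."""
    d = len(query)
    # Similarity of the query to each key, scaled by sqrt(d) as in standard attention.
    scores = [sum(q * k for q, k in zip(query, key)) / math.sqrt(d) for key in keys]
    # Softmax over scores; subtracting the max keeps exp() numerically stable.
    m = max(scores)
    weights = [math.exp(s - m) for s in scores]
    total = sum(weights)
    weights = [w / total for w in weights]
    # Output is the attention-weighted average of the value vectors.
    dim = len(values[0])
    return [sum(w * v[i] for w, v in zip(weights, values)) for i in range(dim)]


# Toy usage: a query aligned with the first key mostly retrieves the first value.
blended = attention([10.0, 0.0], [[10.0, 0.0], [0.0, 10.0]], [[1.0, 2.0], [5.0, 7.0]])
```

When keys are equally similar to the query, the output reduces to the plain average of the values; sharper similarity concentrates the output on one stored primitive.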

Hierarchical Decision-Making Frameworks

Hierarchy organizes choices across time scales so you can select high-level goals while lower-level controllers handle execution and error correction.

You can implement hierarchical controllers with options (temporally extended actions), meta-policies, and subgoal generators that shorten planning horizons and improve sample efficiency. Combining model-based predictions at the higher levels with learned reflexes below reduces training time and helps you transfer strategies between related tasks.
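A minimal sketch of the two-level split, assuming a 1-D toy system: the high level is a fixed list of subgoal waypoints (standing in for a learned subgoal generator or meta-policy), and the low level is a proportional controller standing in for a learned reflex. `HierarchicalController`, `rollout`, and all gains and tolerances are hypothetical.

```python
from dataclasses import dataclass
from typing import List, Tuple


@dataclass
class HierarchicalController:
    waypoints: List[float]   # subgoals from a planner (assumed given here)
    gain: float = 0.5        # low-level proportional gain (illustrative value)
    tolerance: float = 0.05  # distance at which a subgoal counts as reached
    index: int = 0           # which subgoal is currently active

    def high_level(self, position: float) -> float:
        """Advance to the next subgoal once the active one is reached."""
        while (self.index < len(self.waypoints) - 1
               and abs(self.waypoints[self.index] - position) < self.tolerance):
            self.index += 1
        return self.waypoints[self.index]

    def low_level(self, position: float, subgoal: float) -> float:
        """Reflex-like proportional correction toward the active subgoal."""
        return self.gain * (subgoal - position)

    def step(self, position: float) -> Tuple[float, float]:
        subgoal = self.high_level(position)
        return subgoal, self.low_level(position, subgoal)


def rollout(controller: HierarchicalController, start: float, steps: int) -> float:
    position = start
    for _ in range(steps):
        _, command = controller.step(position)
        position += command  # toy dynamics: the command is a position increment
    return position


controller = HierarchicalController([1.0, 2.0, 3.0])
final_position = rollout(controller, 0.0, 100)
```

The high level only decides *which* target is active, so it reasons over three subgoals instead of a hundred low-level steps; that is the horizon shortening the paragraph describes.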

Machine Learning Paradigms for Generalization

Models trained with generalization-focused objectives help you adapt controllers across tasks, combining representation learning and task-conditioned policies to reduce fine-tuning needs.

Reinforcement Learning in Dynamic Environments

Reinforcement learning lets you update policies from trial feedback, supporting online adaptation in nonstationary environments while balancing exploration, exploitation, and safety constraints.
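A bandit-style sketch can show two of these ingredients in isolation. It assumes a constant step size (so value estimates track rewards that drift over time, unlike a sample mean), epsilon-greedy exploration, and a safety constraint modeled as a set of actions masked out before selection. `SafeNonstationaryBandit` and its parameters are hypothetical, and a full RL agent would also handle state.

```python
import random
from typing import List, Set


class SafeNonstationaryBandit:
    """Epsilon-greedy action values with a constant step size and an action mask."""

    def __init__(self, n_actions: int, epsilon: float = 0.1,
                 step_size: float = 0.2, seed: int = 0) -> None:
        self.values: List[float] = [0.0] * n_actions
        self.epsilon = epsilon
        self.step_size = step_size
        self.rng = random.Random(seed)

    def select(self, unsafe: Set[int]) -> int:
        allowed = [a for a in range(len(self.values)) if a not in unsafe]
        if not allowed:
            raise ValueError("no safe action available")
        if self.rng.random() < self.epsilon:
            return self.rng.choice(allowed)  # explore, but only among safe actions
        return max(allowed, key=lambda a: self.values[a])  # exploit the best safe action

    def update(self, action: int, reward: float) -> None:
        # A constant step size weights recent rewards more than old ones,
        # so estimates follow a reward distribution that changes over time.
        self.values[action] += self.step_size * (reward - self.values[action])
```

Masking before both exploration and exploitation means the agent never tries an action the safety layer has ruled out, even while exploring.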

Meta-Learning and Few-Shot Skill Acquisition

Meta-learning trains your models to extract transferable priors so the robot can learn new skills from a few demonstrations and adapt its policies in a handful of gradient steps.

You can adopt metric-based techniques, such as prototypical networks, to match new demonstrations against learned skill embeddings for immediate action selection. Model-agnostic methods such as MAML let you fine-tune policies with a few gradient steps, shortening adaptation time and improving sample efficiency. Meta-reinforcement learning approaches teach task inference and online context conditioning so your robot adjusts its behavior from sparse reward signals. Combining latent variable models, imitation priors, and targeted real-world adaptation helps you minimize transfer gaps and deploy flexible agents faster.
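The matching step of a prototypical network can be sketched as follows: each skill's prototype is the mean of its support embeddings, and a new demonstration is scored by a softmax over negative squared distances to those prototypes. This assumes the embeddings come from an already meta-trained encoder; here they are hand-written 2-D vectors, and the skill names `"pour"` and `"wipe"` are hypothetical.

```python
import math
from typing import Dict, List, Sequence

Vector = Sequence[float]


def prototype(embeddings: List[Vector]) -> List[float]:
    """Skill prototype = mean of that skill's support embeddings."""
    dim = len(embeddings[0])
    return [sum(e[i] for e in embeddings) / len(embeddings) for i in range(dim)]


def squared_distance(a: Vector, b: Vector) -> float:
    return sum((x - y) ** 2 for x, y in zip(a, b))


def classify(query: Vector, support: Dict[str, List[Vector]]) -> Dict[str, float]:
    """Probability of each skill via softmax over negative squared distances."""
    protos = {skill: prototype(embs) for skill, embs in support.items()}
    logits = {skill: -squared_distance(query, p) for skill, p in protos.items()}
    max_logit = max(logits.values())  # subtract the max for numerical stability
    exp = {s: math.exp(v - max_logit) for s, v in logits.items()}
    total = sum(exp.values())
    return {s: v / total for s, v in exp.items()}


support = {
    "pour": [[1.0, 0.0], [0.8, 0.2]],
    "wipe": [[0.0, 1.0], [0.2, 0.8]],
}
probs = classify([0.9, 0.1], support)
best_skill = max(probs, key=probs.get)
```

No gradient step is needed at test time: adding a new skill only means adding its few support embeddings, which is why metric-based methods suit immediate action selection.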

Multi-Modal Sensing and Environmental Perception

Sensor suites combine cameras, lidar, microphones, and tactile arrays so you can perceive complex scenes, detect changes, and adjust task strategies in real time using fused signals and context-aware filtering.

Integrating Computer Vision and Haptic Feedback

Vision and haptic fusion let you refine grasps, infer material properties, and reduce visual uncertainty by combining tactile cues with predictive visual models.
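One simple way to let tactile cues correct an uncertain visual estimate is inverse-variance weighting of two independent Gaussian estimates of the same quantity. This sketch assumes both sensors report a mean and a variance and that their errors are independent; the object-width numbers below are hypothetical.

```python
from typing import Tuple


def fuse_gaussian(mean_a: float, var_a: float,
                  mean_b: float, var_b: float) -> Tuple[float, float]:
    """Fuse two independent Gaussian estimates of the same quantity.

    Each estimate is weighted by its inverse variance, so the more certain
    sensor dominates, and the fused variance is never larger than either input.
    """
    if var_a <= 0 or var_b <= 0:
        raise ValueError("variances must be positive")
    w_a = 1.0 / var_a
    w_b = 1.0 / var_b
    fused_var = 1.0 / (w_a + w_b)
    fused_mean = fused_var * (w_a * mean_a + w_b * mean_b)
    return fused_mean, fused_var


# Hypothetical numbers: vision sees a partly occluded object as 42 mm wide
# (variance 9 mm^2); fingertip contact measures 40 mm (variance 1 mm^2).
width, var = fuse_gaussian(42.0, 9.0, 40.0, 1.0)  # width ≈ 40.2 mm, var ≈ 0.9 mm^2
```

The result sits close to the tactile reading because touch is the more certain sensor here; if the gripper lost contact, its variance would grow and vision would take over.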

Real-Time Mapping and Spatial Awareness

Mapping pipelines keep your world model updated, enabling path planning, obstacle avoidance, and dynamic task allocation from sensor streams.

You rely on SLAM variants, occupancy grids, and sparse voxel maps to maintain consistent spatial representations while sensors stream noisy measurements. Probabilistic filters and loop-closure checks preserve global coherence, while you prioritize low-latency local updates for immediate manipulation and run lower-rate global updates for long-term planning. Semantic labeling and dynamic object tracking let you adapt to moving obstacles and update goal states without halting operations. Practical systems balance update frequency, memory footprint, and communication bandwidth so you can run mapping on-board or split processing between edge devices and the cloud.
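The probabilistic core of an occupancy grid is often a per-cell log-odds update. This sketch assumes a sparse dictionary of cells and an inverse sensor model reduced to two fixed increments (hit or free); the increment and clamp values are illustrative, and ray casting to find which cells a beam passed through is left out.

```python
import math
from typing import Dict, Tuple

Cell = Tuple[int, int]


class LogOddsGrid:
    """Sparse occupancy grid storing log-odds per cell (unobserved = 0, i.e. p = 0.5)."""

    def __init__(self, l_occ: float = 0.85, l_free: float = -0.4, clamp: float = 5.0) -> None:
        self.l_occ = l_occ    # log-odds increment when a beam ends in the cell
        self.l_free = l_free  # log-odds increment when a beam passes through the cell
        self.clamp = clamp    # bounds keep cells responsive if an obstacle moves away
        self.cells: Dict[Cell, float] = {}

    def update(self, cell: Cell, hit: bool) -> None:
        delta = self.l_occ if hit else self.l_free
        value = self.cells.get(cell, 0.0) + delta
        self.cells[cell] = max(-self.clamp, min(self.clamp, value))

    def probability(self, cell: Cell) -> float:
        # Convert log-odds back to an occupancy probability.
        return 1.0 - 1.0 / (1.0 + math.exp(self.cells.get(cell, 0.0)))


grid = LogOddsGrid()
for _ in range(3):
    grid.update((2, 3), hit=True)
grid.update((2, 2), hit=False)
```

Because updates are additive in log-odds space, each cell costs one addition per measurement, which keeps local updates cheap enough for low-latency manipulation; storing only observed cells keeps the memory footprint proportional to what the robot has actually seen.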

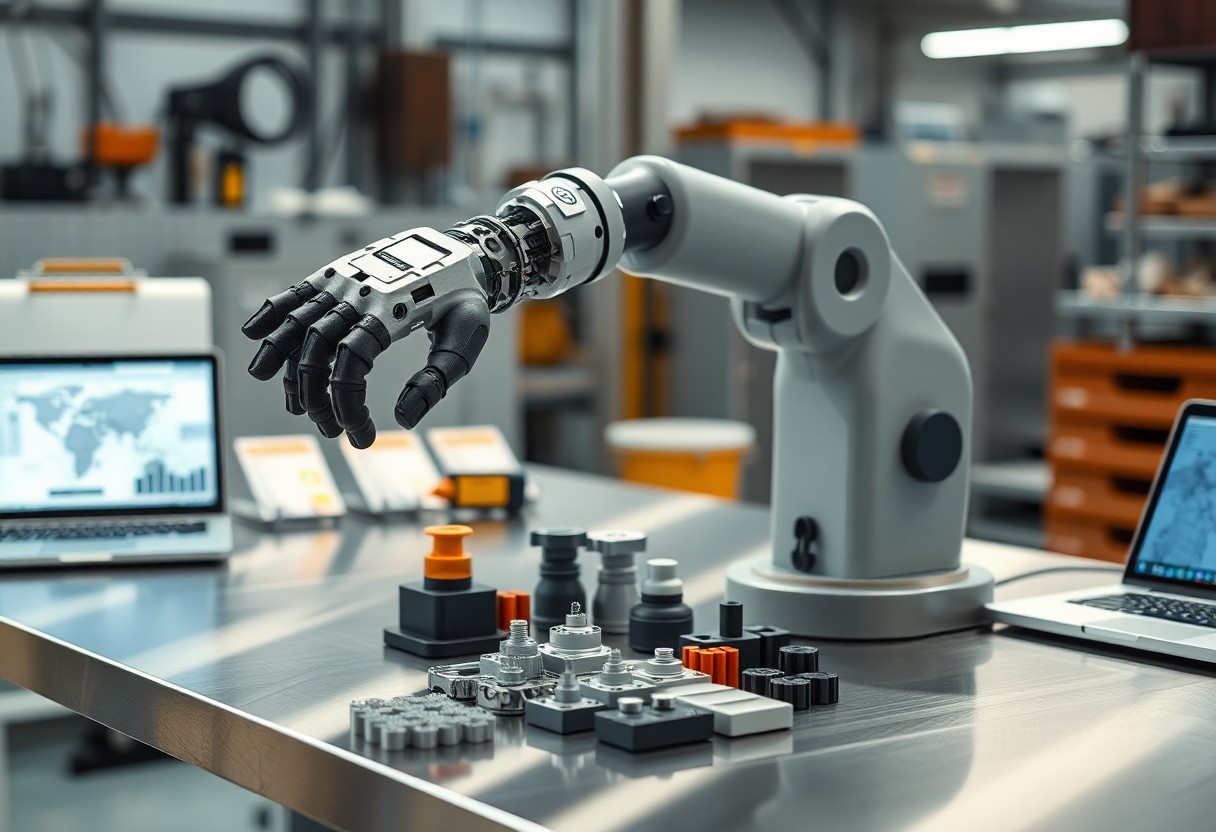

Modular Hardware and Reconfigurable Design

Modularity lets you reconfigure hardware quickly by swapping sensors, limbs, power modules, and compute units so robots can take on new tasks without full redesigns; standardized mounts and pinouts reduce integration time and allow parallel development of task-specific subsystems.

Actuation Systems for Varied Physical Demands

Actuators with adjustable compliance and scalable torque let you match force, speed, and precision to different task profiles while conserving energy and improving interaction safety; modular drive units simplify maintenance and upgrades.
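Adjustable compliance is commonly realized in software with an impedance law, F = K(x_d − x) + D(v_d − v), where lowering the stiffness K makes the joint yield more on contact. This 1-D sketch assumes a force-controllable actuator and adds force saturation as a simple limit; `ImpedanceController` and all gain values are hypothetical.

```python
from dataclasses import dataclass


@dataclass
class ImpedanceController:
    """1-D impedance law: F = K (x_d - x) + D (v_d - v), with force saturation."""
    stiffness: float  # K in N/m; lower values make the axis more compliant
    damping: float    # D in N*s/m
    max_force: float  # N; clip the command as a simple safety limit

    def force(self, x_d: float, x: float, v_d: float = 0.0, v: float = 0.0) -> float:
        f = self.stiffness * (x_d - x) + self.damping * (v_d - v)
        return max(-self.max_force, min(self.max_force, f))

    def set_compliance(self, stiffness: float, damping: float) -> None:
        """Retune K and D for a new task profile without changing hardware."""
        self.stiffness, self.damping = stiffness, damping


# Stiff profile for precise positioning (illustrative gains).
ctrl = ImpedanceController(stiffness=2000.0, damping=50.0, max_force=100.0)
precise = ctrl.force(0.01, 0.0)    # 1 cm error -> 20 N
# Softer profile for tasks involving contact with people or fragile objects.
ctrl.set_compliance(stiffness=200.0, damping=20.0)
compliant = ctrl.force(0.01, 0.0)  # same error -> 2 N
```

The same actuator serves both profiles; only the gains change, which is the software side of matching force and precision to the task.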

Interchangeable End-Effectors and Tool Use

Quick-swap end-effectors such as interchangeable grippers, cutters, and sensors expand a robot's capabilities; self-identifying tools and standardized electrical and mechanical interfaces reduce calibration effort and speed deployment of new functions.

Designing end-effectors for field flexibility requires standard mechanical interfaces (kinematic locators or latches), unified electrical/pneumatic connections, and tool-ID chips so your controller auto-detects and loads appropriate drivers and calibration. You should include onboard sensing (force/torque, tactile) and clear safety interlocks, plus modular firmware and documented URDF models so new tools integrate predictably into motion planners and verification tests.
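The auto-detection step can be sketched as a registry keyed by the ID read from the tool chip. Everything here is a hypothetical stand-in: the tool ID string, driver name, TCP offset, payload, and URDF path are invented for illustration, and reading the ID and the interlock state from hardware is outside the sketch.

```python
from dataclasses import dataclass
from typing import Dict, Tuple


@dataclass(frozen=True)
class ToolProfile:
    driver: str                               # driver to load (hypothetical name)
    tcp_offset_m: Tuple[float, float, float]  # tool-center-point offset from the flange
    max_payload_kg: float
    urdf_path: str                            # model used by planners and verification tests


class ToolRegistry:
    def __init__(self) -> None:
        self._profiles: Dict[str, ToolProfile] = {}

    def register(self, tool_id: str, profile: ToolProfile) -> None:
        self._profiles[tool_id] = profile

    def on_tool_attached(self, tool_id: str, interlock_closed: bool) -> ToolProfile:
        """Return the profile for a newly attached tool, refusing unsafe or unknown tools."""
        if not interlock_closed:
            raise RuntimeError("safety interlock open; refusing to enable tool")
        try:
            return self._profiles[tool_id]
        except KeyError:
            raise LookupError(f"unknown tool id {tool_id!r}; keep tool disabled") from None


registry = ToolRegistry()
registry.register("GRIP-2F-01", ToolProfile(
    driver="parallel_gripper",
    tcp_offset_m=(0.0, 0.0, 0.12),
    max_payload_kg=2.0,
    urdf_path="tools/gripper_2f.urdf",
))
profile = registry.on_tool_attached("GRIP-2F-01", interlock_closed=True)
```

Failing closed on an unknown ID or an open interlock means a new tool stays disabled until someone registers its driver, calibration, and model.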

Transfer Learning and Knowledge Retention

You reuse pretrained models and compressed task representations so your robot adapts faster to new tasks while preserving prior skills, combining fine-tuning with selective freezing and replay buffers to maintain performance across previously learned behaviors.

Cross-Domain Skill Migration

When transferring grasping policies from industrial to home settings, you fine-tune the perception layers and adapt the control priors so core skills generalize across different sensors and object types.
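At the optimizer level, selective freezing means applying gradient updates only to chosen parameter groups. This framework-free sketch assumes parameters are stored as named lists of floats and gradients are already computed; the group names `"perception"` and `"control"` are hypothetical. In a framework such as PyTorch the same effect is usually achieved by setting `requires_grad = False` on the frozen parameters.

```python
from typing import Dict, List, Set

Params = Dict[str, List[float]]


def fine_tune_step(params: Params, grads: Params, trainable: Set[str], lr: float) -> Params:
    """One SGD step that updates only the parameter groups listed in `trainable`.

    Frozen groups are copied unchanged, so the pretrained behavior they encode
    is preserved while the trainable groups adapt to the new domain.
    """
    updated: Params = {}
    for name, values in params.items():
        if name in trainable:
            updated[name] = [w - lr * g for w, g in zip(values, grads[name])]
        else:
            updated[name] = list(values)
    return updated


params = {"perception": [0.5, -0.2], "control": [1.0, 0.3]}
grads = {"perception": [0.1, 0.1], "control": [0.4, 0.4]}
# Adapt perception to the new sensors while keeping the control prior fixed.
new_params = fine_tune_step(params, grads, trainable={"perception"}, lr=0.5)
```

Returning a new dictionary instead of mutating in place also keeps the original pretrained weights available for comparison or rollback.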

Mitigating Catastrophic Forgetting in Lifelong Learning

To protect existing capabilities, you use rehearsal, regularization, and sparse updates so new learning doesn’t overwrite previously acquired skills, preserving long-term competence across evolving task sequences.

Strategies include rehearsal with an episodic memory you sample during updates, regularization methods like elastic weight consolidation to slow changes on important parameters, and modular architectures that isolate new learning. You can also use generative replay to synthesize past experiences when storage is limited, apply sparse update schedules to shield critical connections, and run continual validation to detect performance drift and allocate capacity as tasks accumulate.
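Two of these strategies are compact enough to sketch directly. The elastic weight consolidation penalty adds (λ/2) Σᵢ Fᵢ(θᵢ − θ*ᵢ)² to the new task's loss, where θ* are the weights after the previous task and F is a diagonal Fisher information estimate; the episodic memory below uses reservoir sampling so a fixed-size buffer holds a uniform sample of all experience seen so far. Computing the Fisher values is assumed to happen elsewhere, and the class and function names are hypothetical.

```python
import random
from typing import Generic, List, Sequence, TypeVar

T = TypeVar("T")


def ewc_penalty(theta: Sequence[float], theta_star: Sequence[float],
                fisher: Sequence[float], lam: float) -> float:
    """(lam / 2) * sum_i F_i * (theta_i - theta*_i)^2, added to the new task's loss."""
    return 0.5 * lam * sum(f * (t - s) ** 2 for t, s, f in zip(theta, theta_star, fisher))


class ReservoirBuffer(Generic[T]):
    """Fixed-size episodic memory; reservoir sampling keeps a uniform sample of the stream."""

    def __init__(self, capacity: int, seed: int = 0) -> None:
        self.capacity = capacity
        self.items: List[T] = []
        self.seen = 0
        self.rng = random.Random(seed)

    def add(self, item: T) -> None:
        self.seen += 1
        if len(self.items) < self.capacity:
            self.items.append(item)
        else:
            j = self.rng.randrange(self.seen)  # uniform index in [0, seen)
            if j < self.capacity:
                self.items[j] = item           # replace with probability capacity / seen

    def sample(self, k: int) -> List[T]:
        """Draw a rehearsal minibatch to mix into updates on the new task."""
        return self.rng.sample(self.items, min(k, len(self.items)))


# Parameter 0 moved by 1.0 and matters (F = 4); parameter 1 did not move.
penalty = ewc_penalty([1.0, 2.0], [0.0, 2.0], [4.0, 100.0], lam=0.5)  # 1.0
```

High-Fisher parameters are expensive to move, which slows changes on weights the old task depends on, while the replay buffer keeps old examples in every gradient step.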

Safety and Reliability in Unstructured Workspaces

Safety in unstructured workspaces demands adaptive sensing, fail-safes, and continuous validation so you can trust robots around people; for recent advances in adaptation, see reports that AI now enables robots to adapt rapidly to changing real-world conditions.

Predictive Error Correction and Self-Diagnostics

Predictive models detect anomalies early so you can schedule maintenance and prevent downtime before failures cascade.
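A rolling z-score detector is a simple statistical stand-in for the predictive models mentioned here: it flags a reading (for example, a motor current sample) that deviates from the recent baseline by more than a threshold number of standard deviations. The window size, threshold, and signal are hypothetical; a learned model would replace the mean and standard deviation with its own prediction and uncertainty.

```python
import math
from collections import deque
from typing import Deque


class RollingAnomalyDetector:
    """Flag readings more than `threshold` standard deviations from the recent window."""

    def __init__(self, window: int = 50, threshold: float = 4.0, min_samples: int = 10) -> None:
        self.window: Deque[float] = deque(maxlen=window)
        self.threshold = threshold
        self.min_samples = min_samples

    def check(self, value: float) -> bool:
        anomalous = False
        if len(self.window) >= self.min_samples:
            mean = sum(self.window) / len(self.window)
            var = sum((x - mean) ** 2 for x in self.window) / (len(self.window) - 1)
            std = max(math.sqrt(var), 1e-9)  # floor avoids division by zero on a flat signal
            anomalous = abs(value - mean) / std > self.threshold
        if not anomalous:
            self.window.append(value)  # keep outliers out of the baseline
        return anomalous


detector = RollingAnomalyDetector()
normal_readings = [1.0 + 0.01 * (i % 5) for i in range(30)]
normal_flags = [detector.check(r) for r in normal_readings]
spike_flagged = detector.check(2.0)
```

Excluding flagged readings from the window stops a developing fault from quietly becoming the new baseline.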

Human-Robot Interaction and Collaborative Safety

Interaction design and intent recognition let you work side-by-side with robots while safety zones and force limits protect you.

You benefit from multimodal intent inference, explicit pause gestures, and shared-control arbitration that let you guide behavior and intervene; tactile sensors, vision failovers, and adaptive speed scaling reduce collision risk during task handovers and shifting human intent.
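Adaptive speed scaling can be sketched as a function of the measured human-robot separation: full speed beyond one distance, a stop inside another, and linear scaling between them. The two threshold distances are hypothetical placeholders that a real cell would derive from its own risk assessment, and `speed_scale` is an illustrative name.

```python
def speed_scale(separation_m: float, stop_distance_m: float, full_speed_distance_m: float) -> float:
    """Fraction of nominal speed allowed for a given human-robot separation.

    Returns 0.0 at or inside the stop distance, 1.0 at or beyond the full-speed
    distance, and scales linearly in between.
    """
    if full_speed_distance_m <= stop_distance_m:
        raise ValueError("full-speed distance must exceed stop distance")
    if separation_m <= stop_distance_m:
        return 0.0
    if separation_m >= full_speed_distance_m:
        return 1.0
    return (separation_m - stop_distance_m) / (full_speed_distance_m - stop_distance_m)


# Hypothetical thresholds: stop within 0.5 m, full speed beyond 1.5 m.
halfway = speed_scale(1.0, 0.5, 1.5)  # 0.5
```

A continuous ramp avoids abrupt stop-start behavior as a person approaches during a handover, while still guaranteeing a stop inside the closest zone.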

Conclusion

In practice, design modular control systems, adaptive learning pipelines, and clear safety constraints so robots can learn new tasks with minimal supervision, while you monitor performance and update policies to keep operation reliable and transparent.