Define your sensor choices, SLAM algorithms, chassis, and power requirements first, so you can design and build an autonomous mapping robot that produces accurate maps, maintains localization, and operates safely during field testing.

Hardware Architecture and Component Selection

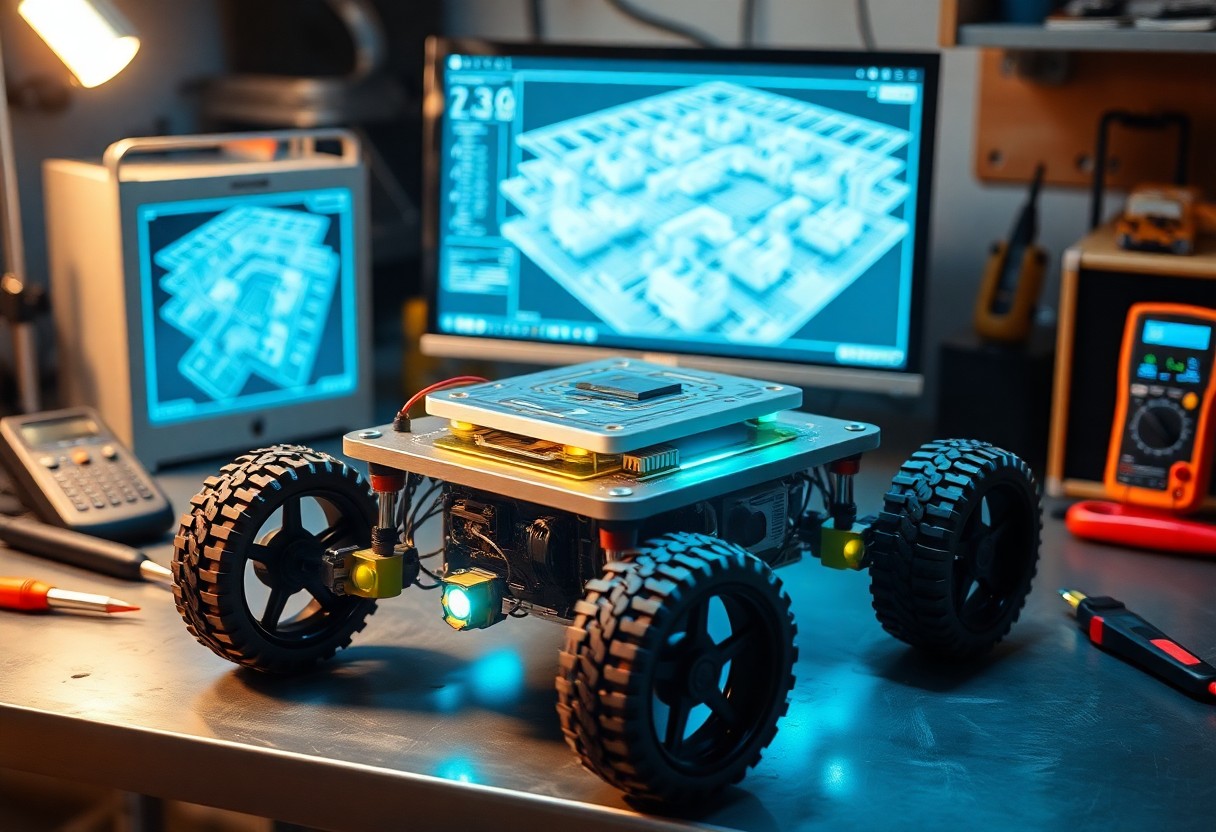

Your hardware design must balance processing, power, and payload constraints. Choose a modular chassis, scalable compute (an embedded GPU or single-board computer), high-efficiency power distribution, and accessible connectors so you can swap sensors and upgrade compute without reworking the platform.

Mobile Platform and Drive Train Specifications

Select a mobile base that matches your terrain and speed requirements: choose differential or omnidirectional drive, size motor torque for the payload, add encoders for odometry, and include suspension if the terrain demands it, so the platform stays stable and mapping remains accurate.
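A quick way to size the drive train is to add up the forces the motors must overcome and convert them to wheel torque. The sketch below assumes a rigid robot on a uniform slope, a constant rolling-resistance coefficient, and load shared evenly across driven wheels; `wheel_torque_per_motor`, `wheel_speed_rpm`, and the default coefficient and safety factor are illustrative, not values from any datasheet.

```python
import math

G = 9.81  # gravitational acceleration, m/s^2


def wheel_torque_per_motor(mass_kg, wheel_radius_m, accel_mps2,
                           grade_deg=0.0, rolling_coeff=0.02,
                           n_driven_wheels=2, safety_factor=1.5):
    """Rough torque (N*m) each driven wheel must supply.

    Sums the forces needed to accelerate the robot, climb a grade, and
    overcome rolling resistance, then converts force to wheel torque.
    rolling_coeff and safety_factor are illustrative defaults, not
    measured values for any particular surface or motor.
    """
    grade = math.radians(grade_deg)
    f_accel = mass_kg * accel_mps2                          # F = m * a
    f_grade = mass_kg * G * math.sin(grade)                 # gravity along the slope
    f_roll = rolling_coeff * mass_kg * G * math.cos(grade)  # rolling resistance
    total_force = f_accel + f_grade + f_roll
    return safety_factor * total_force * wheel_radius_m / n_driven_wheels


def wheel_speed_rpm(top_speed_mps, wheel_radius_m):
    """Wheel speed (rev/min) needed to reach top_speed_mps without slip."""
    return top_speed_mps / (2.0 * math.pi * wheel_radius_m) * 60.0


# Example: 20 kg robot, 5 cm wheels, 0.5 m/s^2 on a 10 degree ramp, 1 m/s top speed
torque = wheel_torque_per_motor(20.0, 0.05, 0.5, grade_deg=10.0)
rpm = wheel_speed_rpm(1.0, 0.05)
print(f"torque per motor: {torque:.2f} N*m, wheel speed: {rpm:.0f} rpm")
```

Compare the result against the motor's continuous (not stall) torque after the gearbox, since long mapping runs keep the motors loaded.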

Sensor Suite Integration for Spatial Perception

Integrate lidar for high-fidelity ranging, stereo or depth cameras for texture and visual odometry, an IMU for pose stability, and optional radar for poor-visibility conditions, so you can build dense, reliable maps.

Lidar, cameras, and IMU must be calibrated and time-synchronized. Fuse the sensors tightly (with an EKF, visual-inertial odometry, or factor-graph SLAM), run intrinsic and extrinsic calibration routines, handle occlusions and overlapping fields of view, and budget bandwidth and CPU/GPU scheduling so mapping stays accurate at your target frame rates.
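Time synchronization ultimately means pairing measurements that describe the same instant. As a minimal sketch, assuming timestamps already share a clock up to a known constant `offset` (for example from hardware triggering or a clock-synchronization protocol), the hypothetical `associate` function below pairs each camera frame with the nearest lidar scan and drops pairs farther apart than `max_dt` rather than forcing a bad match.

```python
from bisect import bisect_left


def associate(ref_stamps, other_stamps, max_dt, offset=0.0):
    """Pair each reference timestamp with the nearest other timestamp.

    other_stamps must be sorted ascending. offset (seconds) is a known clock
    offset added to other_stamps before matching. Pairs farther apart than
    max_dt are dropped. Returns a list of (ref_index, other_index) tuples.
    """
    shifted = [t + offset for t in other_stamps]
    pairs = []
    for i, t in enumerate(ref_stamps):
        j = bisect_left(shifted, t)
        best = None
        # Only the neighbours just before and just after t can be nearest
        for k in (j - 1, j):
            if 0 <= k < len(shifted):
                dt = abs(shifted[k] - t)
                if dt <= max_dt and (best is None or dt < best[1]):
                    best = (k, dt)
        if best is not None:
            pairs.append((i, best[0]))
    return pairs


camera = [0.00, 0.10, 0.20]
lidar = [0.01, 0.12, 0.50]
print(associate(camera, lidar, max_dt=0.03))  # [(0, 0), (1, 1)]
```

The third camera frame is left unpaired because no lidar scan falls within 30 ms of it.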

Embedded Systems and Control Theory

Embedded microcontrollers coordinate sensors, timing, and control loops to keep mapping behavior deterministic; you configure interrupts, task priorities, and signal conditioning to meet real-time constraints.

Microcontroller Interfacing and Firmware Development

Peripherals require precise pin mapping, level shifting, and interrupt schemes. Write firmware that abstracts drivers, manages DMA transfers, and implements safe boot and over-the-air (OTA) update paths to keep mapping reliable.

Motor Control Loops and Odometry Feedback

Encoders provide wheel velocity and displacement data. Tune PID loops to translate velocity setpoints into smooth wheel motion, and fuse the resulting odometry with other sensor estimates to maintain pose accuracy.
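The core of wheel odometry is converting encoder ticks into a pose increment. This sketch assumes a differential-drive base and integrates with the midpoint heading, which is accurate for short update intervals; `diff_drive_odometry` and its parameters are hypothetical names, and `wheel_radius` and `track_width` are exactly the quantities worth calibrating to reduce systematic bias.

```python
import math


def diff_drive_odometry(pose, left_ticks, right_ticks,
                        ticks_per_rev, wheel_radius, track_width):
    """Integrate one encoder update into an (x, y, theta) pose.

    left_ticks / right_ticks are tick counts since the previous update.
    Translation uses the heading at the middle of the step (midpoint rule).
    """
    x, y, theta = pose
    meters_per_tick = 2.0 * math.pi * wheel_radius / ticks_per_rev
    d_left = left_ticks * meters_per_tick
    d_right = right_ticks * meters_per_tick
    d_center = 0.5 * (d_left + d_right)          # distance travelled by the base centre
    d_theta = (d_right - d_left) / track_width   # heading change from wheel difference
    mid_heading = theta + 0.5 * d_theta
    x += d_center * math.cos(mid_heading)
    y += d_center * math.sin(mid_heading)
    theta += d_theta
    theta = math.atan2(math.sin(theta), math.cos(theta))  # wrap to (-pi, pi]
    return (x, y, theta)


pose = (0.0, 0.0, 0.0)
# Hypothetical robot: 1024-tick encoders, 5 cm wheels, 30 cm track width
for left, right in [(100, 100), (100, 120), (120, 100)]:
    pose = diff_drive_odometry(pose, left, right, 1024, 0.05, 0.30)
print(pose)
```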

Tuning PID gains and adding feedforward terms lets you compensate for inertia and friction with minimal overshoot; model the motor dynamics, estimate time constants, and add integrator anti-windup. Correct cumulative encoder drift by fusing IMU and range-sensor updates in an EKF or complementary filter, and calibrate wheel radius, track width, and encoder resolution to reduce systematic odometry bias. Implement safety limits, velocity filters, and periodic recalibration routines so the pose estimate that feeds mapping stays stable during long missions.
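A wheel-velocity loop combining PID, feedforward, and anti-windup might look like the following sketch. It assumes a normalized output (for example PWM duty in [-1, 1]), takes the derivative on the measurement to avoid kicks when the setpoint jumps, and freezes the integrator while the output is saturated (conditional integration, one of several anti-windup schemes). `VelocityPID`, `kv`, and `ks` are hypothetical; the toy first-order motor model at the end exists only to exercise the loop.

```python
class VelocityPID:
    """Wheel-velocity PID with feedforward and conditional-integration anti-windup.

    kv (velocity feedforward) and ks (static-friction feedforward) are
    hypothetical gains you would identify from your own motor tests.
    The output is clamped to +/- u_max, e.g. a normalized PWM duty cycle.
    """

    def __init__(self, kp, ki, kd, kv=0.0, ks=0.0, u_max=1.0):
        self.kp, self.ki, self.kd = kp, ki, kd
        self.kv, self.ks = kv, ks
        self.u_max = u_max
        self.integral = 0.0
        self.prev_measured = None

    def update(self, setpoint, measured, dt):
        error = setpoint - measured
        # Derivative on measurement avoids an output spike when the setpoint jumps
        if self.prev_measured is None:
            derivative = 0.0
        else:
            derivative = -(measured - self.prev_measured) / dt
        self.prev_measured = measured

        # Feedforward: a term proportional to the commanded speed plus a
        # constant push in the direction of motion to break static friction
        direction = (setpoint > 0) - (setpoint < 0)
        feedforward = self.kv * setpoint + self.ks * direction

        u = feedforward + self.kp * error + self.ki * self.integral + self.kd * derivative
        saturated = abs(u) > self.u_max
        # Anti-windup: stop integrating while the output is saturated and the
        # error would push it further into saturation
        if not (saturated and error * u > 0):
            self.integral += error * dt
        return max(-self.u_max, min(self.u_max, u))


# Toy first-order wheel model (hypothetical time constant and gain) to exercise the loop
pid = VelocityPID(kp=0.8, ki=2.0, kd=0.0, kv=0.1, u_max=1.0)
speed, tau, gain, dt = 0.0, 0.2, 10.0, 0.01  # gain: rad/s per unit duty
for _ in range(300):
    duty = pid.update(5.0, speed, dt)
    speed += dt / tau * (gain * duty - speed)
print(f"wheel speed after 3 s: {speed:.2f} rad/s")
```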

Simultaneous Localization and Mapping (SLAM) Frameworks

SLAM frameworks combine sensor fusion, pose estimation, and map management, letting you balance accuracy, latency, and compute for your robot. For implementation pointers, consult the hands-on project "Building a 3D-printed robot that uses SLAM for …".

Evaluation of Lidar-based vs. Visual SLAM Algorithms

Lidar provides precise range data and remains usable in feature-sparse scenes, while visual SLAM extracts richer features in textured environments; weigh sensor cost, lighting sensitivity, and processing load when selecting the best fit for your mapping goals.

Scan Matching and Loop Closure Optimization

Scan matching aligns successive scans for local pose estimates, while loop closure detects revisits to reduce drift; you must tune matching thresholds and pose graph solvers to maintain global consistency.

Fine-tuning scan matching means choosing among ICP variants, NDT (Normal Distributions Transform), and feature-based correspondences based on sensor noise and scene geometry, then implementing effective outlier rejection and adaptive thresholds for stable, real-time performance. Pose graph optimization with solvers such as g2o or Ceres then distributes the corrections from detected loop closures across the graph, so monitor solver convergence and computational load to prevent runtime stalls.
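To make the scan-matching step concrete, here is a minimal point-to-point ICP sketch in 2D. It assumes scans are lists of (x, y) points, rejects correspondences beyond `max_corr_dist` as a simple form of outlier rejection, and solves each alignment in closed form; it is not NDT or a feature-based method, and the function names are illustrative.

```python
import math


def best_rigid_transform_2d(src, dst):
    """Closed-form least-squares (theta, tx, ty) mapping src points onto dst."""
    n = len(src)
    sx = sum(p[0] for p in src) / n
    sy = sum(p[1] for p in src) / n
    dx = sum(q[0] for q in dst) / n
    dy = sum(q[1] for q in dst) / n
    s_cos = s_sin = 0.0
    for (px, py), (qx, qy) in zip(src, dst):
        ax, ay, bx, by = px - sx, py - sy, qx - dx, qy - dy
        s_cos += ax * bx + ay * by   # sum of dot products
        s_sin += ax * by - ay * bx   # sum of 2D cross products
    theta = math.atan2(s_sin, s_cos)
    c, s = math.cos(theta), math.sin(theta)
    # Translation maps the rotated src centroid onto the dst centroid
    return theta, dx - (c * sx - s * sy), dy - (s * sx + c * sy)


def transform(theta, tx, ty, pts):
    c, s = math.cos(theta), math.sin(theta)
    return [(c * x - s * y + tx, s * x + c * y + ty) for x, y in pts]


def icp_2d(src, dst, iters=30, max_corr_dist=0.5, tol=1e-9):
    """Point-to-point ICP returning (theta, tx, ty) that maps src onto dst.

    Correspondences farther apart than max_corr_dist are rejected as
    outliers. Nearest neighbours are brute force to stay dependency-free;
    a k-d tree is the usual choice for real scans.
    """
    pose = (0.0, 0.0, 0.0)
    for _ in range(iters):
        moved = transform(*pose, src)
        matched_src, matched_dst = [], []
        for p_orig, p in zip(src, moved):
            q = min(dst, key=lambda d, p=p: math.dist(d, p))
            if math.dist(p, q) <= max_corr_dist:
                matched_src.append(p_orig)
                matched_dst.append(q)
        if len(matched_src) < 3:
            break  # too few inliers to trust an update
        new_pose = best_rigid_transform_2d(matched_src, matched_dst)
        step = max(abs(a - b) for a, b in zip(new_pose, pose))
        pose = new_pose
        if step < tol:
            break
    return pose


# Synthetic L-shaped reference scan, and the same scan seen from a slightly offset pose
ref = [(0.2 * i, 0.0) for i in range(10)] + [(0.0, 0.2 * j) for j in range(1, 8)]
true_theta, true_tx, true_ty = 0.03, 0.04, -0.02
c, s = math.cos(true_theta), math.sin(true_theta)
scan = [(c * (x - true_tx) + s * (y - true_ty), -s * (x - true_tx) + c * (y - true_ty))
        for x, y in ref]
print(icp_2d(scan, ref))
```

Each iteration re-solves the full transform from the original scan to its current nearest neighbours, so the loop stops once correspondences no longer change.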

Navigation Stack and Path Planning

Tune the navigation stack so global planners and local controllers work together: align planner goals, cost functions, and controller frequencies with your robot’s sensors and motion constraints so mapping and traversal remain consistent during autonomous exploration.

Global and Local Costmap Configuration

Configure global and local costmaps to match your sensor footprints: set resolution, inflation, and update rates, and define static-obstacle and dynamic layers so you maintain accurate occupancy grids for both long-range planning and reactive control.
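Inflation is what turns a binary occupancy grid into graded costs the planner can trade off. The sketch below is modelled on the exponential decay used by the ROS costmap_2d inflation layer (254 for lethal cells, 253 inside the inscribed radius, decaying cost out to the inflation radius); the grid format and function names are assumptions for illustration.

```python
import math

LETHAL = 254     # cell occupied by an obstacle
INSCRIBED = 253  # robot centre here guarantees a collision


def inflation_cost(dist_m, inscribed_radius, inflation_radius, cost_scaling_factor):
    """Cost for a cell dist_m metres from the nearest obstacle."""
    if dist_m == 0.0:
        return LETHAL
    if dist_m <= inscribed_radius:
        return INSCRIBED
    if dist_m > inflation_radius:
        return 0
    # Exponential decay outside the inscribed radius
    return int((INSCRIBED - 1) * math.exp(-cost_scaling_factor * (dist_m - inscribed_radius)))


def inflate(grid, resolution, inscribed_radius, inflation_radius, cost_scaling_factor):
    """grid: rows of 0 (free) / 1 (obstacle). Returns a grid of costs 0..254.

    Uses brute-force nearest-obstacle distances, fine for a demo; a distance
    transform or wavefront expansion is preferable for real map sizes.
    """
    obstacles = [(r, c) for r, row in enumerate(grid) for c, v in enumerate(row) if v]
    costs = []
    for r, row in enumerate(grid):
        cost_row = []
        for c in range(len(row)):
            if not obstacles:
                cost_row.append(0)
                continue
            cells = min(math.hypot(r - orow, c - ocol) for orow, ocol in obstacles)
            cost_row.append(inflation_cost(cells * resolution, inscribed_radius,
                                           inflation_radius, cost_scaling_factor))
        costs.append(cost_row)
    return costs


print(inflate([[1, 0, 0, 0, 0]], resolution=0.1, inscribed_radius=0.1,
              inflation_radius=0.3, cost_scaling_factor=10.0))
```

A larger `cost_scaling_factor` makes cost fall off faster, letting the planner pass closer to obstacles.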

Dynamic Obstacle Avoidance and Trajectory Generation

Plan trajectories that account for predicted obstacle motion: score candidates on safety, smoothness, and feasibility, respect your robot’s kinematic and acceleration limits, and replan frequently so the robot responds in time.

When dynamic avoidance requires higher fidelity, you integrate sensor fusion, short-term motion prediction, and controllers like MPC or DWA; tune cost weights, sampling density, prediction horizon, and safety buffers, and run the local planner at high frequency so you can replan around unpredictable pedestrians and moving obstacles without losing control.
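A DWA-style local planner can be sketched in a few dozen lines: sample velocities reachable within one control period, roll each out over a short horizon, discard rollouts that come within the robot radius of a predicted obstacle position, and score the rest. This version assumes point obstacles with constant-velocity prediction and scores goal distance at the end of the rollout rather than the heading term of the original DWA formulation; the weights, limits, and names are hypothetical starting points.

```python
import math


def rollout(x, y, th, v, w, dt, steps):
    """Forward-simulate a constant (v, w) command; returns the visited (x, y) points."""
    points = []
    for _ in range(steps):
        th += w * dt
        x += v * math.cos(th) * dt
        y += v * math.sin(th) * dt
        points.append((x, y))
    return points


def dwa_select(state, goal, obstacles, limits, weights=(1.0, 0.5, 0.1),
               dt=0.1, horizon_steps=20, samples=7):
    """Choose (v, w) by sampling the dynamic window and scoring rollouts.

    state: (x, y, theta, v, w). obstacles: (ox, oy, vx, vy) tuples whose
    motion is predicted with a constant-velocity model. limits: dict with
    v_max, w_max, a_max, alpha_max, robot_radius. Returns (0.0, 0.0) if every
    candidate is predicted to collide.
    """
    x, y, th, v0, w0 = state
    # Dynamic window: velocities reachable within one control period
    v_lo = max(0.0, v0 - limits["a_max"] * dt)
    v_hi = min(limits["v_max"], v0 + limits["a_max"] * dt)
    w_lo = max(-limits["w_max"], w0 - limits["alpha_max"] * dt)
    w_hi = min(limits["w_max"], w0 + limits["alpha_max"] * dt)
    w_goal, w_clear, w_speed = weights
    best, best_score = (0.0, 0.0), -math.inf
    for i in range(samples):
        v = v_lo + (v_hi - v_lo) * i / (samples - 1)
        for j in range(samples):
            w = w_lo + (w_hi - w_lo) * j / (samples - 1)
            path = rollout(x, y, th, v, w, dt, horizon_steps)
            clearance = math.inf
            for k, (px, py) in enumerate(path):
                t = (k + 1) * dt  # compare against where each obstacle will be at time t
                for ox, oy, vx, vy in obstacles:
                    clearance = min(clearance, math.hypot(px - (ox + vx * t), py - (oy + vy * t)))
            if clearance < limits["robot_radius"]:
                continue  # predicted collision: discard this candidate
            end_x, end_y = path[-1]
            score = (-w_goal * math.hypot(goal[0] - end_x, goal[1] - end_y)
                     + w_clear * min(clearance, 2.0)  # cap so open space does not dominate
                     + w_speed * v)
            if score > best_score:
                best, best_score = (v, w), score
    return best


limits = {"v_max": 1.0, "w_max": 1.0, "a_max": 1.0, "alpha_max": 2.0, "robot_radius": 0.3}
pedestrian = (2.0, -1.0, 0.0, 0.5)  # walking across the robot's path at 0.5 m/s
print(dwa_select((0.0, 0.0, 0.0, 0.5, 0.0), (5.0, 0.0), [pedestrian], limits))
```

Raising `samples` or `horizon_steps` improves coverage at a higher CPU cost, which is the sampling-density and prediction-horizon trade-off described above.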

Software Integration and Middleware

Integrating middleware and orchestration layers lets you connect sensing, mapping, and planning components while managing message flow, timing, and health monitoring across nodes. Keep interfaces stable, version packages, and test cross-platform behavior to preserve mapping accuracy and runtime predictability.

ROS/ROS2 Workspace Configuration

Configuring your ROS/ROS2 workspace requires clear package organization, a consistent build tool (catkin for ROS1, colcon for ROS2), and precise dependency control so you can build sensor drivers, SLAM, and control stacks reproducibly across machines.

Communication Protocols and Data Serialization

Selecting appropriate protocols and serializers lets you balance latency, throughput, and reliability: use UDP for low-latency streaming where occasional loss is acceptable, TCP or DDS with reliable QoS for guaranteed delivery, and compact formats such as Protobuf or CBOR for efficient serialization.

Evaluate transport QoS, schema evolution, endianness, message framing, and CPU cost so you can choose encryption, compression, or middleware routing that preserves timestamp fidelity and minimizes packet loss during mapping runs.
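Framing, endianness, and timestamp fidelity are easiest to see with an explicit byte layout. Rather than Protobuf or CBOR, this sketch uses Python's `struct` module with a hypothetical header (marker, version, message type, integer-nanosecond timestamp, payload length) followed by a CRC-32 from `zlib`. Integer nanoseconds avoid the precision loss of float seconds, and the version byte gives decoders a hook for schema evolution.

```python
import struct
import zlib

MAGIC = 0xA55A                     # hypothetical frame marker
HEADER = struct.Struct("<HBBQI")   # little-endian: magic, version, msg_type, stamp_ns, payload_len
CRC = struct.Struct("<I")          # CRC-32 over header + payload


def encode_frame(msg_type, stamp_ns, payload, version=1):
    """Build header + payload + CRC with an explicit, padding-free byte order."""
    header = HEADER.pack(MAGIC, version, msg_type, stamp_ns, len(payload))
    crc = zlib.crc32(header + payload) & 0xFFFFFFFF
    return header + payload + CRC.pack(crc)


def decode_frame(buf):
    """Return (version, msg_type, stamp_ns, payload, bytes_consumed), or None if
    buf does not yet hold a full frame. Raises ValueError on a bad marker or CRC."""
    if len(buf) < HEADER.size:
        return None
    magic, version, msg_type, stamp_ns, length = HEADER.unpack_from(buf, 0)
    if magic != MAGIC:
        raise ValueError("bad frame marker")
    end = HEADER.size + length
    if len(buf) < end + CRC.size:
        return None
    (crc,) = CRC.unpack_from(buf, end)
    if zlib.crc32(buf[:end]) & 0xFFFFFFFF != crc:
        raise ValueError("checksum mismatch")
    return version, msg_type, stamp_ns, bytes(buf[HEADER.size:end]), end + CRC.size


frame = encode_frame(msg_type=3, stamp_ns=1_700_000_000_123_456_789, payload=b"scan")
print(len(frame), decode_frame(frame))
```

`decode_frame` returns `None` on a partial buffer so a stream reader can wait for more bytes, and raises on corruption so the caller can resynchronize on the next marker.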

System Calibration and Field Testing

Calibration aligns sensors, actuators, and controllers before outdoor trials; field tests then validate the mapping pipeline under real-world motion, lighting, and signal interference so you can refine parameters.

Sensor Fusion Tuning and Error Mitigation

Tuning sensor-fusion gains and time offsets minimizes drift and reduces spurious correlations; run repeated trajectories and adjust covariances to balance responsiveness against stability.
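The covariance trade-off can be seen in a scalar Kalman filter. This is a deliberately simplified sketch with a random-walk state, where `q` (process-noise variance) and `r` (measurement-noise variance) stand in for the matrices you would tune in a full EKF: a larger `q / r` ratio tracks changes faster, while a smaller one smooths more. `kalman_1d` is a hypothetical helper name.

```python
def kalman_1d(measurements, q, r, x0=0.0, p0=1.0):
    """Scalar random-walk Kalman filter; returns the filtered estimates.

    q: process-noise variance (how fast the true value may change).
    r: measurement-noise variance (how much to distrust each reading).
    """
    x, p = x0, p0
    estimates = []
    for z in measurements:
        p += q               # predict: uncertainty grows by the process noise
        k = p / (p + r)      # gain: trust the measurement more when p >> r
        x += k * (z - x)     # update the estimate toward the measurement
        p *= (1.0 - k)       # uncertainty shrinks after the update
        estimates.append(x)
    return estimates


readings = [1.0 + 0.3 * (-1) ** i for i in range(20)]  # deterministic "noisy" signal around 1.0
responsive = kalman_1d(readings, q=0.5, r=0.09)
smooth = kalman_1d(readings, q=0.001, r=0.09)
print(f"last estimates: responsive={responsive[-1]:.3f}, smooth={smooth[-1]:.3f}")
```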

Benchmarking Mapping Accuracy in Complex Environments

Assessing mapping accuracy requires ground-truth comparisons and metrics such as absolute trajectory error (ATE), root-mean-square error (RMSE), and map coverage; test in cluttered corridors, multi-floor areas, and dynamic scenes to expose edge cases.

When benchmarking, define standardized routes with varied geometry, produce high-resolution ground truth via total station, motion capture, or pre-surveyed maps, and run multiple trials so you can report ATE, relative pose error (RPE), RMSE, and occupancy-grid intersection over union (IoU) with confidence intervals. Include sensor dropout, dynamic obstacles, and lighting changes to evaluate reliability, and apply statistical tests when comparing algorithm versions and parameter sets.
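ATE and translational RPE are straightforward to compute once estimated and ground-truth poses are time-associated. This sketch assumes 2D (x, y, theta) poses and metric sensors, so it aligns trajectories with a rigid rotation and translation only; monocular visual SLAM would also need a scale term (for example Umeyama's similarity alignment). The function names are illustrative; you would run them per trial and then compute confidence intervals across trials.

```python
import math


def align_se2(est, gt):
    """Least-squares rotation + translation (no scale) mapping est positions onto gt."""
    n = len(gt)
    ex = sum(p[0] for p in est) / n
    ey = sum(p[1] for p in est) / n
    gx = sum(q[0] for q in gt) / n
    gy = sum(q[1] for q in gt) / n
    s_cos = s_sin = 0.0
    for p, q in zip(est, gt):
        ax, ay, bx, by = p[0] - ex, p[1] - ey, q[0] - gx, q[1] - gy
        s_cos += ax * bx + ay * by
        s_sin += ax * by - ay * bx
    theta = math.atan2(s_sin, s_cos)
    c, s = math.cos(theta), math.sin(theta)
    return theta, gx - (c * ex - s * ey), gy - (s * ex + c * ey)


def ate_rmse(est, gt):
    """Absolute trajectory error (RMSE of positions) after rigid alignment.

    est and gt are time-associated sequences whose first two entries are x, y.
    """
    theta, tx, ty = align_se2(est, gt)
    c, s = math.cos(theta), math.sin(theta)
    total = 0.0
    for p, q in zip(est, gt):
        ax = c * p[0] - s * p[1] + tx
        ay = s * p[0] + c * p[1] + ty
        total += (ax - q[0]) ** 2 + (ay - q[1]) ** 2
    return math.sqrt(total / len(gt))


def rpe_trans_rmse(est, gt, delta=1):
    """Translational relative pose error over pose pairs `delta` steps apart.

    est and gt hold (x, y, theta) poses. Each relative motion is expressed in
    the frame of its starting pose, isolating local drift from global error.
    """
    def relative(poses, i):
        x0, y0, th0 = poses[i]
        x1, y1 = poses[i + delta][0], poses[i + delta][1]
        c, s = math.cos(th0), math.sin(th0)
        dx, dy = x1 - x0, y1 - y0
        return c * dx + s * dy, -s * dx + c * dy   # rotate by -th0
    sq = []
    for i in range(len(gt) - delta):
        (ex, ey), (gx, gy) = relative(est, i), relative(gt, i)
        sq.append((ex - gx) ** 2 + (ey - gy) ** 2)
    return math.sqrt(sum(sq) / len(sq))


gt = [(0.5 * i, 0.1 * i * i, 0.05 * i) for i in range(10)]
est = [(x + 0.02 * i, y, th) for i, (x, y, th) in enumerate(gt)]  # slow forward drift
print(f"ATE={ate_rmse(est, gt):.4f} m, RPE={rpe_trans_rmse(est, gt):.4f} m")
```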

Final Words

With these pieces in place, you can select appropriate sensors, implement mapping algorithms, and test rigorously so your autonomous mapping robot performs reliably and safely. Validate in varied environments, analyze failures, and refine your design with clear documentation for operational deployment.