In this concise guide, you will learn how to select sensors, design controls, integrate perception, and test your system so you can build an autonomous robot that reliably maps its surroundings and avoids obstacles.

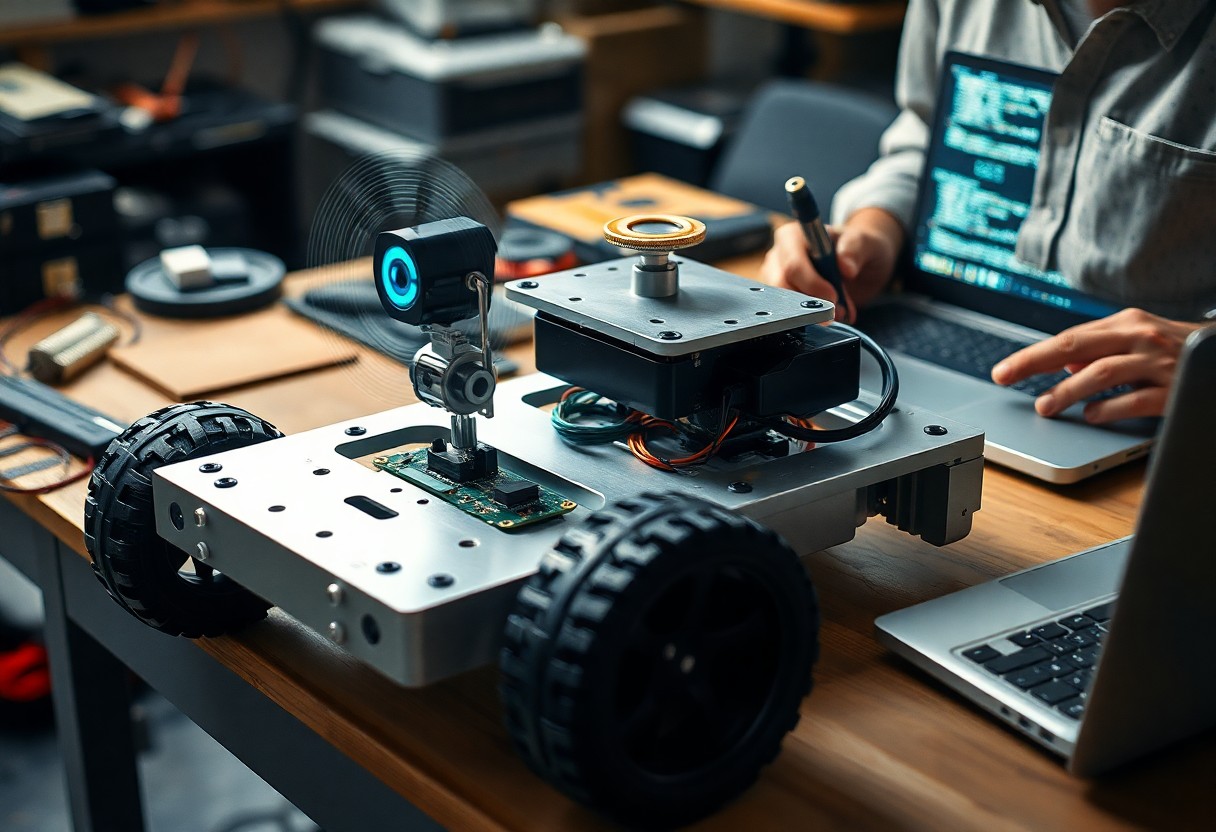

Hardware Selection and Mechanical Design

Select components that match sensor payload, computation, and mounting constraints so you can swap parts during testing and iterate quickly.

Chassis Configuration and Drive Systems

Chassis selection influences stability and sensor placement; you should choose wheelbase, ground clearance, and material to match expected surfaces and payload.

Power Management and Actuator Control

Balance battery capacity, voltage rails, and actuator demands so you can meet runtime goals without excessive mass or heat.

You must design power distribution to isolate noise-sensitive sensors, include voltage regulation for actuators, and size wiring to handle stall currents. Plan battery management with cell balancing, temperature monitoring, and fail-safes, and implement current sensing plus firmware protections so you can prevent thermal or mechanical failures during extended missions.
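As a rough starting point for the power budget described above, the sketch below estimates runtime from usable battery capacity and computes a worst-case current for sizing wiring and fuses. The function names and every number in the example are hypothetical placeholders; substitute values from your own battery and motor datasheets.

```python
def estimate_runtime_hours(capacity_ah, usable_fraction, avg_current_a):
    """Runtime in hours from usable capacity and average current draw."""
    if avg_current_a <= 0:
        raise ValueError("average current must be positive")
    return capacity_ah * usable_fraction / avg_current_a


def peak_current_a(stall_currents_a, electronics_a):
    """Worst-case draw: every motor stalled at once plus the electronics load."""
    return sum(stall_currents_a) + electronics_a


# Hypothetical example: 5 Ah pack, 80% usable, 2 A average draw,
# two motors with 6 A stall current each, 1.5 A for sensors and compute.
runtime = estimate_runtime_hours(capacity_ah=5.0, usable_fraction=0.8, avg_current_a=2.0)
peak = peak_current_a([6.0, 6.0], electronics_a=1.5)
print(f"runtime ~ {runtime:.1f} h, size wiring for at least {peak:.1f} A")
```

Treat the result as an upper bound on runtime: real packs deliver less capacity at high discharge rates and low temperatures, so leave margin when you compare it against your mission goal.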

Sensor Integration for Environmental Perception

Sensors let you combine range, vision, and inertial data to build a coherent map of surroundings for path planning and obstacle avoidance.

Lidar and Ultrasonic Rangefinding

Lidar and ultrasonic sensors both measure distance for obstacle detection: lidar provides high-resolution scans over a wide field of view, while ultrasonic sensors handle simple, low-cost short-range checks at much coarser angular resolution.
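To make the two rangefinding modes concrete, here is a minimal sketch that converts an ultrasonic echo time into a distance and a planar lidar scan into Cartesian points in the sensor frame. The function names are illustrative, the speed-of-sound formula is the common linear approximation for air, and the scan layout (a start angle plus a fixed angular increment) is an assumption about how your driver reports data.

```python
import math


def speed_of_sound_m_s(temp_c):
    """Linear approximation of the speed of sound in air."""
    return 331.3 + 0.606 * temp_c


def ultrasonic_distance_m(echo_time_s, temp_c=20.0):
    """The echo travels out and back, so halve the round-trip path."""
    return speed_of_sound_m_s(temp_c) * echo_time_s / 2.0


def scan_to_points(ranges_m, angle_min_rad, angle_increment_rad, max_range_m):
    """Convert a planar scan to (x, y) points, dropping invalid returns."""
    points = []
    for i, r in enumerate(ranges_m):
        # This comparison also rejects NaN and infinite readings.
        if not (0.0 < r <= max_range_m):
            continue
        angle = angle_min_rad + i * angle_increment_rad
        points.append((r * math.cos(angle), r * math.sin(angle)))
    return points


# Hypothetical readings: a 10 ms echo and a three-beam scan with one dropout.
print(ultrasonic_distance_m(0.010))
print(scan_to_points([1.0, float("inf"), 2.0], 0.0, math.pi / 2, 10.0))
```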

Computer Vision and Depth Sensing

Computer vision and depth sensing enable you to detect objects, estimate distances, and recognize features using cameras, stereo rigs, and depth cameras for semantic mapping and localization.

Depth sensors like stereo rigs, structured light, and time-of-flight cameras produce dense point clouds; you should fuse them with IMU and lidar, apply filtering and confidence weighting, and use learning-based models to handle dynamic objects and occlusions.
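One simple form of confidence weighting is inverse-variance fusion, where each sensor's estimate counts in proportion to how much you trust it. The sketch below assumes independent measurements of the same distance with known variances; the function name and the example numbers are hypothetical, and a full pipeline would do this per point or per map cell rather than for a single range.

```python
def fuse_ranges(measurements):
    """Fuse (value, variance) pairs by inverse-variance weighting.

    Returns the fused value and its variance. Assumes the measurements
    are independent and all variances are positive.
    """
    if not measurements:
        raise ValueError("need at least one measurement")
    weights = [1.0 / var for _, var in measurements]
    total = sum(weights)
    fused = sum(w * value for w, (value, _) in zip(weights, measurements)) / total
    return fused, 1.0 / total


# Hypothetical example: a stereo estimate (noisier) and a lidar estimate.
value, variance = fuse_ranges([(2.0, 0.04), (2.2, 0.01)])
print(value, variance)  # about 2.16 m, pulled toward the more certain lidar reading
```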

Computing Platforms and Software Architecture

Choose computing platforms that balance processing power, energy draw, and real-time needs so they can run the perception, control, and path planning modules. Partition the software into modular processes so you can test and update components independently.

Embedded Controllers and Single-Board Computers

Microcontrollers handle low-latency motor control while single-board computers run sensor fusion and high-level planning; you should pair them with clear communication interfaces and careful power budgeting to ensure predictable behavior in the field.
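A clear communication interface usually means a small, fixed frame format with an integrity check. The sketch below shows one hypothetical format for sending wheel velocity commands from the single-board computer to the microcontroller: a header byte, two little-endian 32-bit floats, and an XOR checksum. The layout, the header value, and the function names are assumptions for illustration, not a standard protocol.

```python
import struct

HEADER = 0xAA      # hypothetical start-of-frame marker
_BODY_FMT = "<Bff"  # header byte, left and right wheel velocity (float32)


def xor_checksum(data):
    """XOR of all bytes; cheap to compute on a microcontroller."""
    result = 0
    for byte in data:
        result ^= byte
    return result


def encode_cmd(left_m_s, right_m_s):
    """Build a 10-byte frame: 9-byte body plus 1 checksum byte."""
    body = struct.pack(_BODY_FMT, HEADER, left_m_s, right_m_s)
    return body + bytes([xor_checksum(body)])


def decode_cmd(frame):
    """Validate length, header, and checksum, then return the velocities."""
    if len(frame) != struct.calcsize(_BODY_FMT) + 1:
        raise ValueError("unexpected frame length")
    body, received = frame[:-1], frame[-1]
    if body[0] != HEADER or xor_checksum(body) != received:
        raise ValueError("corrupt frame")
    _, left, right = struct.unpack(_BODY_FMT, body)
    return left, right


frame = encode_cmd(0.5, -0.25)
print(decode_cmd(frame))
```

An XOR checksum catches single-byte corruption but not every error pattern; if the link is noisy, a CRC is the usual upgrade.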

Implementing the Robot Operating System (ROS) Framework

ROS provides messaging, device drivers, and community packages so you can assemble perception, mapping, and control as interoperable nodes; design namespaces and topics to limit unnecessary message traffic and simplify debugging.

Configuring ROS for autonomy means structuring node graphs, defining quality-of-service (QoS) profiles for sensor and actuator topics in ROS 2, and isolating time-critical loops in real-time-safe components. Use launch files, parameter files, and namespaces to manage complexity. Choose ROS 2 for its DDS-based communication and configurable reliability, or ROS 1 for legacy support. Integrate simulation, logging, and CI to validate behavior before field deployment.

Localization and Mapping Strategies

Mapping approaches combine multi-sensor fusion, probabilistic filters, and loop-closure methods so you can maintain consistent local and global pose estimates while exploring unknown environments.

Simultaneous Localization and Mapping (SLAM)

SLAM algorithms enable you to build and refine maps while estimating pose, balancing feature extraction, data association, and real-time constraints to support long-term autonomy in dynamic settings.

Odometry and Inertial Measurement Fusion

Odometry fused with IMU measurements helps you limit drift between landmark updates, providing continuous pose tracking during brief sensor occlusions or rapid maneuvers.

Combining odometry and IMU through an EKF or complementary filter lets you correct biases, smooth velocity spikes, and recover orientation during wheel slip, improving short-term accuracy until visual or lidar updates restore absolute reference.
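The complementary-filter option can be sketched for heading alone. In this minimal version the gyro is integrated for fast changes, and the result is nudged toward the wheel-odometry heading to limit gyro bias drift. The function names, the `alpha` value, and the choice of odometry as the slow reference are assumptions; an EKF would additionally track uncertainty and estimate the gyro bias explicitly.

```python
import math


def wrap_angle(angle_rad):
    """Wrap an angle to the interval [-pi, pi]."""
    return math.atan2(math.sin(angle_rad), math.cos(angle_rad))


def complementary_heading(prev_heading, gyro_rate, odom_heading, dt, alpha=0.98):
    """Blend integrated gyro rate with an odometry heading.

    alpha near 1 trusts the gyro over short intervals; the remaining
    (1 - alpha) slowly pulls the estimate toward the odometry heading.
    """
    gyro_heading = prev_heading + gyro_rate * dt
    # Wrap the difference so the correction takes the short way around.
    error = wrap_angle(odom_heading - gyro_heading)
    return wrap_angle(gyro_heading + (1.0 - alpha) * error)


# Hypothetical step: gyro reports 1 rad/s for 0.1 s, odometry still reads 0 rad.
heading = complementary_heading(0.0, gyro_rate=1.0, odom_heading=0.0, dt=0.1, alpha=0.9)
print(heading)  # close to 0.09 rad
```

During wheel slip the odometry heading is the unreliable input, so one reasonable design choice is to raise `alpha` toward 1 (or skip the correction) whenever slip is detected.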

Path Planning and Motion Control

Design planners that balance global route selection with real-time control, and tune motion controllers for smooth turns and speed profiles. See "12. Autonomous Navigation – How to Make a Robot" for practical examples and algorithms for path planning and closed-loop motion control.

Global and Local Trajectory Generation

Global planners provide you with coarse routes, while local trajectory generators refine feasible, smooth paths that respect your robot’s kinematics and dynamics, enabling reliable execution in changing environments.

Obstacle Avoidance and Reactive Navigation

Reactive controllers let you respond instantly to obstacles using sensor fusion, potential fields, or dynamic window approaches, blending with higher-level planners to keep motion safe and on target.
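As an illustration of the potential-field approach, the sketch below computes a velocity command from an attractive pull toward the goal and a repulsive push away from nearby obstacles. The gains, the influence radius, and the speed limit are hypothetical tuning values, and the function name is illustrative. Potential fields are known to get stuck in local minima, which is one reason to blend them with a higher-level planner.

```python
import math


def potential_field_velocity(robot, goal, obstacles,
                             k_att=1.0, k_rep=0.5, influence_m=1.5, v_max=0.5):
    """Return a (vx, vy) command from attractive and repulsive terms."""
    vx = k_att * (goal[0] - robot[0])
    vy = k_att * (goal[1] - robot[1])
    for ox, oy in obstacles:
        dx, dy = robot[0] - ox, robot[1] - oy
        dist = math.hypot(dx, dy)
        # Only obstacles inside the influence radius contribute.
        if 0.0 < dist < influence_m:
            gain = k_rep * (1.0 / dist - 1.0 / influence_m) / dist ** 2
            vx += gain * dx / dist
            vy += gain * dy / dist
    # Clamp the command to the speed limit while keeping its direction.
    speed = math.hypot(vx, vy)
    if speed > v_max:
        vx, vy = vx * v_max / speed, vy * v_max / speed
    return vx, vy


# Hypothetical scene: goal straight ahead, one obstacle ahead and to the right.
print(potential_field_velocity((0.0, 0.0), (10.0, 0.0), [(0.5, -0.2)]))
```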

Sensors should feed a fused obstacle map so you can classify static versus dynamic hazards; apply occupancy grids, velocity-obstacle methods, or predictive tracking to forecast object motion and plan avoidance maneuvers. You must tune safety margins, reaction thresholds, and recovery behaviors, integrate local planners with global goals, and test extensively in simulation and real-world scenarios to prevent oscillation and unsafe behavior.
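The occupancy-grid option is commonly implemented with log-odds updates, which turn repeated sensor hits and misses into a per-cell occupancy probability. The sketch below handles a single cell; the 0.7 and 0.3 sensor-model probabilities and the clamping limits are hypothetical tuning values. Clamping keeps a cell from becoming so certain that it cannot change when an object moves, which matters for dynamic hazards.

```python
import math

L_OCC = math.log(0.7 / 0.3)   # evidence added when a beam ends in the cell
L_FREE = math.log(0.3 / 0.7)  # evidence added when a beam passes through it
L_MIN, L_MAX = -4.0, 4.0      # clamp so cells stay responsive to change


def update_cell(log_odds, hit):
    """Apply one observation to a cell and clamp the result."""
    updated = log_odds + (L_OCC if hit else L_FREE)
    return max(L_MIN, min(L_MAX, updated))


def probability(log_odds):
    """Convert log-odds back to an occupancy probability."""
    return 1.0 - 1.0 / (1.0 + math.exp(log_odds))


cell = 0.0  # log-odds 0 means unknown (probability 0.5)
for hit in (True, True, False, True):
    cell = update_cell(cell, hit)
print(probability(cell))
```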

System Integration and Validation

Combine hardware and software modules, verify their interfaces, and run integration tests to confirm that sensors, controllers, and communications work together under expected conditions before wider trials.

Simulation Environments and Digital Twins

Simulations let you exercise algorithms, tune parameters, and reveal integration issues using digital twins that emulate sensor noise, actuators, and environmental dynamics for safer iteration.
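Emulating sensor noise can start very simply. The sketch below models a range sensor with Gaussian noise, random dropouts, and a maximum range, using a seeded generator so simulation runs are repeatable. The noise level, dropout probability, and function name are hypothetical; a realistic digital twin would fit these parameters to logs from the physical sensor.

```python
import random


def simulate_range(true_range_m, rng, sigma_m=0.02, dropout_prob=0.05, max_range_m=10.0):
    """Return a noisy reading, or None for a dropout or out-of-range target."""
    if rng.random() < dropout_prob or true_range_m > max_range_m:
        return None
    noisy = rng.gauss(true_range_m, sigma_m)
    # Keep the reading inside the sensor's physical limits.
    return min(max(noisy, 0.0), max_range_m)


rng = random.Random(42)  # fixed seed so test runs are reproducible
readings = [simulate_range(2.0, rng) for _ in range(5)]
print(readings)
```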

Field Testing and Performance Metrics

Field testing validates real-world behavior: measure reliability, latency, path efficiency, and failure modes against defined acceptance thresholds and safety limits.

During extended trials, execute representative routes and stress edge cases while collecting synchronized logs for localization error, mapping consistency, obstacle-avoidance success, latency, and energy use. Then apply statistical analysis, confidence intervals, and failure-rate metrics to prioritize fixes and refine operational thresholds before deployment.
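The statistical step can be sketched with two small helpers: an approximate 95% confidence interval for a mean (for example, localization error) and a Wilson score interval for a success rate (for example, obstacle-avoidance success). The function names and sample data are hypothetical, and the mean interval uses a normal approximation, so with only a handful of trials a t-distribution interval would be more appropriate.

```python
import math
import statistics


def mean_ci95(samples):
    """Mean and approximate 95% interval using the normal approximation."""
    if len(samples) < 2:
        raise ValueError("need at least two samples")
    mean = statistics.mean(samples)
    half_width = 1.96 * statistics.stdev(samples) / math.sqrt(len(samples))
    return mean, mean - half_width, mean + half_width


def wilson_interval(successes, trials, z=1.96):
    """Wilson score interval for a success proportion."""
    if trials <= 0:
        raise ValueError("trials must be positive")
    p = successes / trials
    denom = 1.0 + z * z / trials
    center = (p + z * z / (2.0 * trials)) / denom
    half_width = z * math.sqrt(p * (1.0 - p) / trials + z * z / (4.0 * trials ** 2)) / denom
    return center - half_width, center + half_width


# Hypothetical trial data: localization errors in meters, and 95 of 100
# obstacle encounters handled without contact.
errors_m = [0.04, 0.06, 0.05, 0.09, 0.03, 0.07, 0.05, 0.06]
print(mean_ci95(errors_m))
print(wilson_interval(95, 100))
```

Comparing the lower bound of the success interval against your acceptance threshold, rather than the raw success rate, is a conservative way to decide whether more trials or fixes are needed.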

To wrap up

With these pieces in place, integrate sensors, SLAM algorithms, and precise motion control so the robot can perceive its surroundings and plan its path. Validate behavior through staged trials and metric-driven tuning to ensure consistent autonomous operation in varied conditions.