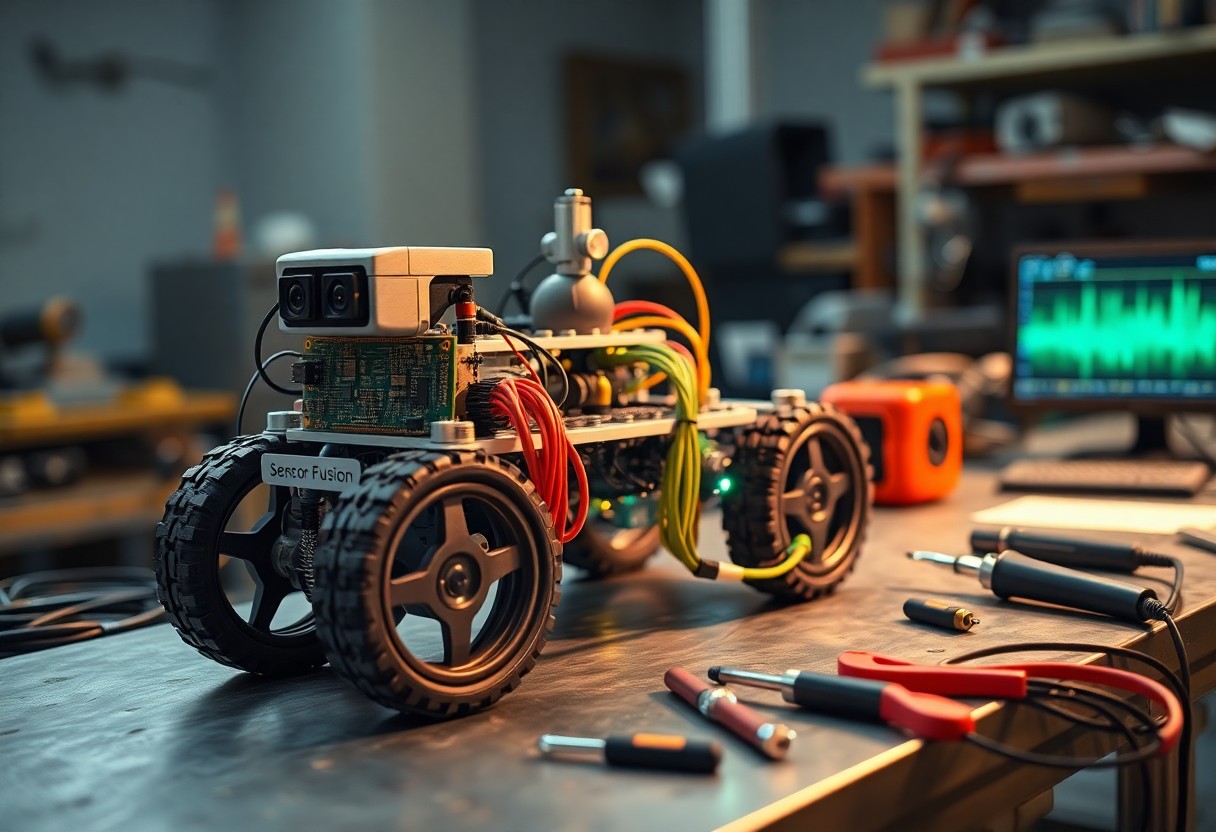

To build a robot that fuses IMU, LiDAR, and vision data, you combine sensors, a microcontroller, and fusion algorithms. This guide walks through hardware selection, calibration, sensor synchronization, and data fusion techniques that lead to reliable perception and control.

Hardware Architecture and Sensor Selection

Hardware choices define bus topology, power distribution, and compute placement, so you balance bandwidth, latency, and mechanical constraints when picking sensors and processors.

Primary Kinematic Sensors: IMUs and Encoders

IMUs provide angular rates and accelerations while encoders deliver wheel or joint positions; you fuse these for dead-reckoning and closed-loop control, prioritizing sampling rate and calibration accuracy.
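As a minimal sketch of the dead-reckoning idea above, the function below advances a planar pose using encoder-derived wheel travel for distance and the IMU yaw rate for heading. It assumes a differential-drive base; the function name `dead_reckon` and its parameters are hypothetical and chosen only for illustration.

```python
import math

def dead_reckon(pose, d_left, d_right, gyro_z, dt):
    """Advance a planar pose (x, y, theta) by one time step.

    Assumptions (not from the original text): differential-drive base,
    d_left/d_right are wheel travel in metres since the last step (already
    converted from encoder ticks), gyro_z is the IMU yaw rate in rad/s,
    and dt is the step duration in seconds.
    """
    x, y, theta = pose
    d_center = 0.5 * (d_left + d_right)   # distance travelled by the base centre
    d_theta = gyro_z * dt                 # heading change taken from the gyro
    theta_mid = theta + 0.5 * d_theta     # midpoint heading for the translation
    x += d_center * math.cos(theta_mid)
    y += d_center * math.sin(theta_mid)
    theta += d_theta
    theta = math.atan2(math.sin(theta), math.cos(theta))  # wrap to (-pi, pi]
    return (x, y, theta)

pose = (0.0, 0.0, 0.0)
pose = dead_reckon(pose, d_left=0.10, d_right=0.10, gyro_z=0.0, dt=0.01)
```

Using the gyro for heading and the encoders for distance is one common split; unbounded drift remains, which is why later stages correct this estimate with other sensors.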

Environmental Perception: LiDAR and Depth Cameras

LiDAR and depth cameras offer complementary range and dense geometry; you combine them for obstacle detection, mapping, and semantically informed path planning, accounting for sensor noise and frame alignment.

Sensor fusion pipelines pair LiDAR’s precise ranging with depth cameras’ dense per-pixel measurements to improve segmentation and classification; you must handle time synchronization, extrinsic calibration, differing noise models, occlusion-aware merging, and adaptive weighting based on reflectivity, ambient light, and expected object sizes to maintain coherent, actionable point clouds.
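One simple way to express the adaptive weighting mentioned above is inverse-variance weighting of two range readings of the same surface. The sketch below assumes both sensors are already time-aligned and expressed in the same frame, and that depth-camera error grows roughly with the square of range; the coefficient `k` and both function names are hypothetical.

```python
def depth_variance(z, k=0.01):
    """Hypothetical depth-camera noise model: std grows as k * z**2 (metres)."""
    return (k * z * z) ** 2

def fuse_ranges(r_lidar, var_lidar, r_depth, var_depth):
    """Inverse-variance weighted fusion of two range measurements.

    Returns the fused range and its variance. The reading with the smaller
    variance receives the larger weight.
    """
    w_lidar = 1.0 / var_lidar
    w_depth = 1.0 / var_depth
    fused = (w_lidar * r_lidar + w_depth * r_depth) / (w_lidar + w_depth)
    fused_var = 1.0 / (w_lidar + w_depth)
    return fused, fused_var

# LiDAR with 2 cm std vs. a depth camera reading at about 2 m
fused, fused_var = fuse_ranges(2.00, 0.02 ** 2, 2.10, depth_variance(2.10))
```

In practice the variances would also depend on reflectivity and ambient light, as the text notes; this sketch only varies them with range.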

Mathematical Foundations of Sensor Fusion

You use linear algebra, probability, and optimization to fuse heterogeneous sensor data into consistent state estimates while aligning observability and stability analyses with system objectives.

Stochastic Modeling and Noise Characterization

Modeling sensor noise statistically lets you characterize biases, correlations, and time-varying variance, fit process and measurement covariances, and propagate uncertainty through estimators.
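A basic first step in noise characterization is to log a sensor while it is stationary and estimate its bias and variance from the samples. The sketch below assumes the true value during the log is known (for example, zero angular rate for a gyro at rest); the helper names are hypothetical, and time-varying effects such as bias drift would need a longer analysis than this.

```python
from statistics import fmean, variance

def characterize(samples, true_value=0.0):
    """Estimate constant bias and noise variance from a stationary log."""
    bias = fmean(samples) - true_value
    var = variance(samples)          # unbiased sample variance (n - 1)
    return bias, var

def sample_covariance(a, b):
    """Unbiased sample covariance between two equally long channels."""
    mean_a, mean_b = fmean(a), fmean(b)
    return sum((x - mean_a) * (y - mean_b) for x, y in zip(a, b)) / (len(a) - 1)

gyro_x = [0.011, 0.009, 0.012, 0.008, 0.010]   # rad/s, illustrative values only
gyro_y = [-0.002, 0.001, -0.001, 0.000, 0.002]
bias_x, var_x = characterize(gyro_x)
# 2x2 measurement covariance R for the two channels
R = [[var_x, sample_covariance(gyro_x, gyro_y)],
     [sample_covariance(gyro_y, gyro_x), variance(gyro_y)]]
```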

Implementation of Extended Kalman Filters (EKF)

Implementing an Extended Kalman Filter asks you to linearize nonlinear dynamics and measurements, compute Jacobians, propagate covariance, and apply consistent predict-update cycles for accurate state estimation.

Careful tuning and implementation reduce divergence. In the prediction step, propagate x̂ = f(x̂, u) and P = F P F^T + Q. In the update step, compute K = P H^T (H P H^T + R)^{-1} and x̂ = x̂ + K (z - h(x̂)), then update the covariance with the Joseph form, P = (I - K H) P (I - K H)^T + K R K^T, which preserves the symmetry and positive semidefiniteness of P. You should derive analytic Jacobians when possible, perform NIS/NEES consistency tests, gate outliers, and consider square-root or information filters for numerical stability on constrained hardware.
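To make the predict-update cycle concrete, here is a deliberately small scalar sketch. It assumes a robot moving along a 1D track with a commanded velocity `u`, and a range measurement to a beacon at a fixed, nonzero perpendicular offset, so the measurement model h(x) = sqrt(x² + offset²) is nonlinear. The scenario and all names are hypothetical; a real filter would use vector states and matrix operations.

```python
import math

def ekf_predict(x, P, u, dt, Q):
    """Prediction: x = f(x, u), P = F P F^T + Q (scalar case)."""
    x_pred = x + u * dt        # f(x, u): constant-velocity motion
    F = 1.0                    # Jacobian df/dx
    P_pred = F * P * F + Q
    return x_pred, P_pred

def ekf_update(x, P, z, R, beacon_offset):
    """Update with a range measurement z to a beacon (offset must be nonzero)."""
    h = math.hypot(x, beacon_offset)     # predicted measurement h(x)
    H = x / h                            # Jacobian dh/dx
    S = H * P * H + R                    # innovation variance
    K = P * H / S                        # Kalman gain
    innovation = z - h
    x_new = x + K * innovation
    # Joseph form: (I - K H) P (I - K H)^T + K R K^T, scalar version
    P_new = (1.0 - K * H) ** 2 * P + K * K * R
    nis = innovation ** 2 / S            # normalized innovation squared
    return x_new, P_new, nis

x, P = 2.5, 1.0
x, P = ekf_predict(x, P, u=1.0, dt=0.5, Q=0.01)
x, P, nis = ekf_update(x, P, z=5.02, R=0.01, beacon_offset=4.0)
```

The returned `nis` value can be compared against a chi-square threshold to gate outliers before the state is overwritten; this sketch applies the update unconditionally.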

Software Integration and Data Processing

Integration centralizes sensor streams, time alignment, calibration, and preprocessing so you can fuse data reliably while minimizing latency and errors.

Real-Time Operating Systems and ROS Framework

An RTOS gives you deterministic task scheduling and prioritized communications, while ROS adds hardware abstraction and middleware tools; used together, they let you deploy real-time sensor fusion nodes.

Multi-Threaded Data Acquisition and Buffering

Threads should isolate sensor drivers, manage fixed-size buffers, and timestamp samples so you can maintain throughput and avoid packet loss under load.

Careful design of lock-free queues, priority-inheritance strategies, and efficient cross-thread synchronization lets you bound end-to-end latency, handle bursty inputs, and ensure consistent fusion input quality.
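The buffering pattern above can be sketched with a bounded, timestamped buffer that a driver thread writes and the fusion thread drains. Note that this sketch swaps in a lock-guarded `collections.deque` rather than a true lock-free queue, since that is the simpler option in Python; the class name `SampleBuffer` and its methods are hypothetical.

```python
import threading
import time
from collections import deque

class SampleBuffer:
    """Fixed-size buffer of (timestamp, value) pairs shared between threads."""

    def __init__(self, capacity):
        self._buf = deque(maxlen=capacity)
        self._lock = threading.Lock()
        self.dropped = 0   # samples overwritten because the consumer fell behind

    def push(self, value, stamp=None):
        """Store a sample; stamps default to the monotonic clock at arrival."""
        if stamp is None:
            stamp = time.monotonic()
        with self._lock:
            if len(self._buf) == self._buf.maxlen:
                self.dropped += 1          # oldest sample is about to be evicted
            self._buf.append((stamp, value))

    def drain(self):
        """Return all buffered samples in arrival order and empty the buffer."""
        with self._lock:
            items = list(self._buf)
            self._buf.clear()
        return items

imu_buffer = SampleBuffer(capacity=256)
imu_buffer.push((0.0, 0.0, 9.81))
batch = imu_buffer.drain()
```

Tracking `dropped` gives a direct measure of overload, which is useful when deciding whether the buffer capacity or the consumer rate needs to change.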

Temporal Synchronization and Spatial Calibration

Temporal synchronization and spatial calibration align timestamps and coordinate frames so you can fuse multi-sensor data accurately; you correct clock offsets, compensate latencies, and register sensor poses for consistent spatiotemporal inputs.
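Once clocks are aligned, samples from a fast sensor often still need to be resampled at the timestamps of a slower one. A minimal sketch of that step is linear interpolation of a scalar channel at a query time, assuming strictly increasing timestamps already expressed on a common clock; the function name `interpolate_at` is hypothetical.

```python
from bisect import bisect_right

def interpolate_at(stamps, values, t):
    """Linearly interpolate a scalar channel at time t.

    stamps must be strictly increasing. Returns None when t lies outside
    the logged interval, so the caller can decide how to handle the gap.
    """
    if not stamps or t < stamps[0] or t > stamps[-1]:
        return None
    i = bisect_right(stamps, t)
    if i == len(stamps):                 # t equals the final timestamp
        return values[-1]
    t0, t1 = stamps[i - 1], stamps[i]
    alpha = (t - t0) / (t1 - t0)
    return values[i - 1] + alpha * (values[i] - values[i - 1])

# Resample a gyro channel at a camera frame time (illustrative values)
gyro_t = [0.000, 0.005, 0.010, 0.015]
gyro_z = [0.10, 0.12, 0.11, 0.13]
rate_at_frame = interpolate_at(gyro_t, gyro_z, 0.0075)
```

Linear interpolation suits scalar rates and positions; orientations would need a rotation-aware method instead.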

Precision Time Protocol (PTP) and Timestamping

PTP (IEEE 1588) synchronizes device clocks across Ethernet so you can timestamp measurements precisely; you should evaluate hardware timestamping support, network delay asymmetry, and fallback strategies, because sub-microsecond alignment for fusion pipelines generally depends on hardware timestamping.
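The arithmetic behind PTP's delay request-response exchange can be sketched in a few lines. Here t1 is when the master sends Sync, t2 when the slave receives it, t3 when the slave sends Delay_Req, and t4 when the master receives it. The calculation assumes a symmetric network path; the function name is hypothetical and the timestamps are illustrative nanosecond values.

```python
def ptp_offset_and_delay(t1, t2, t3, t4):
    """Estimate slave clock offset and mean path delay from four timestamps.

    t1, t4 are read on the master clock; t2, t3 on the slave clock.
    Assumes the forward and return path delays are equal.
    """
    offset = ((t2 - t1) - (t4 - t3)) / 2      # slave time minus master time
    mean_delay = ((t2 - t1) + (t4 - t3)) / 2  # one-way path delay
    return offset, mean_delay

# Slave runs 100_000 ns ahead of the master, one-way delay is 10_000 ns
offset_ns, delay_ns = ptp_offset_and_delay(
    t1=0, t2=110_000, t3=200_000, t4=110_000
)
corrected_stamp = 200_000 - offset_ns   # slave timestamp mapped to master time
```

Any asymmetry between the two path directions shows up directly as offset error, which is one reason to measure network delays rather than assume them.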

Extrinsic and Intrinsic Sensor Calibration

Calibration determines sensor intrinsics and extrinsics so you can map measurements into a common frame; you perform camera intrinsic calibration, IMU scale and bias estimation, and rigid-body pose optimization to reduce systematic errors before fusion.
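To illustrate how the two kinds of calibration are used together, the sketch below maps a LiDAR point into the camera frame with an extrinsic rotation and translation, then projects it to pixel coordinates with pinhole intrinsics. Lens distortion is ignored, and the function names and numeric values are hypothetical placeholders rather than calibrated results.

```python
def transform_point(R, t, p):
    """Apply a rigid-body transform p' = R p + t (R is 3x3, t and p are 3-vectors)."""
    return tuple(
        sum(R[i][j] * p[j] for j in range(3)) + t[i] for i in range(3)
    )

def project_pinhole(p_cam, fx, fy, cx, cy):
    """Project a camera-frame point to pixels; None if it is behind the camera."""
    x, y, z = p_cam
    if z <= 0.0:
        return None
    return (fx * x / z + cx, fy * y / z + cy)

# Extrinsics: LiDAR frame to camera frame (placeholder values)
R_cam_lidar = [[1.0, 0.0, 0.0],
               [0.0, 1.0, 0.0],
               [0.0, 0.0, 1.0]]
t_cam_lidar = (0.05, 0.0, 0.0)

point_lidar = (1.0, 0.0, 2.0)
point_cam = transform_point(R_cam_lidar, t_cam_lidar, point_lidar)
pixel = project_pinhole(point_cam, fx=500.0, fy=500.0, cx=320.0, cy=240.0)
```

Overlaying projected LiDAR points on the image is also a quick visual check of extrinsic quality: misalignment at object edges usually indicates a calibration or timing error.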

You should use target-based and targetless routines: perform camera calibration with checkerboards, run LiDAR-camera extrinsic estimation via mutual observations or hand-eye calibration, and refine intrinsics and extrinsics jointly with bundle adjustment while modeling IMU biases and time offsets for minimal reprojection and motion errors.

System Validation and Error Analysis

Validation focuses on quantifying sensor errors and system drift so you can prioritize fixes; run statistical tests, propagate uncertainties, and log discrepancies across scenarios to guide calibration and algorithm updates.

Benchmarking Fusion Accuracy Against Ground Truth

Compare fused outputs to ground truth trajectories and timestamps so you can compute RMSE, alignment errors, and confidence intervals that reveal bias, latency, and scale mismatches.
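A minimal version of this comparison associates each estimated pose with the nearest ground-truth sample in time and computes the position RMSE over the matched pairs. The sketch assumes both trajectories are non-empty lists of `(t, x, y)` tuples sorted by time and already expressed in the same frame; the function name and the `max_dt` tolerance are hypothetical.

```python
import math
from bisect import bisect_left

def position_rmse(estimate, truth, max_dt=0.02):
    """Return (rmse, matched_count) for 2D positions.

    Estimates with no ground-truth sample within max_dt seconds are skipped.
    """
    truth_t = [row[0] for row in truth]
    squared_errors = []
    for t, x, y in estimate:
        i = bisect_left(truth_t, t)
        candidates = [j for j in (i - 1, i) if 0 <= j < len(truth)]
        j = min(candidates, key=lambda k: abs(truth_t[k] - t))
        if abs(truth_t[j] - t) > max_dt:
            continue                      # no ground truth close enough in time
        _, gx, gy = truth[j]
        squared_errors.append((x - gx) ** 2 + (y - gy) ** 2)
    if not squared_errors:
        raise ValueError("no estimate could be matched to ground truth")
    return math.sqrt(sum(squared_errors) / len(squared_errors)), len(squared_errors)

truth = [(0.0, 0.0, 0.0), (1.0, 1.0, 0.0), (2.0, 2.0, 0.0)]
estimate = [(0.0, 0.0, 0.0), (1.0, 1.0, 1.0), (5.0, 9.0, 9.0)]
rmse, matched = position_rmse(estimate, truth)
```

Reporting the matched count alongside the RMSE matters: a low error computed over very few matches can hide timestamp problems.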

Stress Testing in Dynamic Environments

Stress test the fusion pipeline under sensor dropouts, occlusions, and high dynamics so you can quantify failure modes, recovery time, and degradation thresholds for safe operation.

During stress tests you should script sensor faults, time skew, and aggressive motion profiles so you can observe estimator divergence and controller responses. Set up hardware-in-the-loop and replay logs to reproduce failures, inject noise and latency to measure graceful degradation, and record metrics like convergence time, overshoot, and false-positive rates. Establish pass/fail criteria, automated alerts, and recovery strategies to harden deployment.
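Scripted faults can be applied to a recorded log before replaying it through the pipeline. The sketch below assumes a log of `(timestamp, value)` samples and injects a dropout window, a constant latency, and seeded Gaussian noise so that each run is reproducible; the function name `inject_faults` and its parameters are hypothetical.

```python
import random

def inject_faults(log, dropout=None, latency=0.0, noise_std=0.0, seed=0):
    """Return a corrupted copy of a (timestamp, value) log.

    dropout: optional (start, end) window; samples with start <= t < end are removed.
    latency: seconds added to every remaining timestamp.
    noise_std: standard deviation of zero-mean Gaussian noise added to values.
    """
    rng = random.Random(seed)            # fixed seed keeps failures reproducible
    corrupted = []
    for t, value in log:
        if dropout is not None and dropout[0] <= t < dropout[1]:
            continue                     # simulate the sensor going silent
        corrupted.append((t + latency, value + rng.gauss(0.0, noise_std)))
    return corrupted

log = [(0.0, 1.0), (1.0, 1.0), (2.0, 1.0), (3.0, 1.0)]
faulty = inject_faults(log, dropout=(1.0, 3.0), latency=0.5)
```

Feeding `faulty` through the same replay path as the clean log lets you compare convergence time and divergence behaviour under identical motion.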

Summing up

To summarize, you finalize the system architecture, select complementary sensors, implement time synchronization and sensor fusion algorithms, and calibrate continuously to reduce drift. You then validate performance across test scenarios and iterate on firmware and mechanical integration to ensure reliable perception and control for your robot.