Autonomous navigation combines sensor fusion, SLAM-based mapping, precise localization, path planning, and closed-loop control so that your robot follows safe routes, avoids obstacles, and adapts to changing environments.

Sensor Integration and Perception

Sensors must be harmonized so you can interpret conflicting streams: align timestamps, compensate for drift, and prioritize data quality to keep perception reliable in varied operational conditions.
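As a rough illustration of timestamp alignment, the sketch below resamples a fast scalar stream (for example a gyro axis) at the timestamp of a slower sensor using linear interpolation. The function name `interpolate_at`, the `max_gap` threshold, and the sample data are assumptions made for this example, not part of any particular driver or framework.

```python
from bisect import bisect_left

def interpolate_at(timestamps, values, t_query, max_gap=0.05):
    """Linearly interpolate a scalar stream at t_query (seconds).

    Returns None when t_query lies outside the stream, or when the two
    neighbouring samples are more than max_gap apart (likely a dropout).
    """
    if not timestamps or not (timestamps[0] <= t_query <= timestamps[-1]):
        return None
    i = bisect_left(timestamps, t_query)
    if timestamps[i] == t_query:
        return values[i]
    t0, t1 = timestamps[i - 1], timestamps[i]
    if t1 - t0 > max_gap:
        return None
    w = (t_query - t0) / (t1 - t0)
    return (1.0 - w) * values[i - 1] + w * values[i]

# Hypothetical data: 100 Hz gyro samples and one camera frame timestamp.
imu_t = [0.00, 0.01, 0.02, 0.03]
gyro_z = [0.0, 1.0, 2.0, 3.0]
gyro_at_frame = interpolate_at(imu_t, gyro_z, 0.015)
```

Returning `None` instead of extrapolating is a deliberate choice here: a downstream filter can skip the update rather than trust a value invented across a gap.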

Multi-modal Data Fusion (Lidar, IMU, and Vision)

Fusion algorithms combine Lidar, IMU, and vision so you can resolve occlusions, maintain pose accuracy, and smooth measurement noise across modalities using probabilistic filters or learned models.
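One of the simplest probabilistic filters is a scalar Kalman filter. The sketch below fuses a motion increment (as might come from integrating an IMU) with a direct position measurement (as might come from Lidar) along a single axis. The one-dimensional state and the noise values `q` and `r` are simplifying assumptions for illustration; a real system would track a full pose with matrix covariances.

```python
def kalman_step(x, p, u, z, q, r):
    """One predict/update cycle of a scalar Kalman filter.

    x, p : prior position estimate and its variance
    u    : predicted displacement since the last step (e.g. from an IMU)
    z    : position measurement (e.g. from Lidar)
    q, r : process and measurement noise variances (assumed values)
    """
    # Predict: apply the motion increment and grow the uncertainty.
    x_pred = x + u
    p_pred = p + q
    # Update: blend prediction and measurement by their relative confidence.
    k = p_pred / (p_pred + r)
    x_new = x_pred + k * (z - x_pred)
    p_new = (1.0 - k) * p_pred
    return x_new, p_new

# Hypothetical numbers: the IMU suggests 1.0 m of motion, Lidar reads 1.2 m.
x, p = kalman_step(x=0.0, p=1.0, u=1.0, z=1.2, q=0.1, r=0.1)
```

The fused estimate lands between the prediction and the measurement, and the variance shrinks after the update, which is the noise-smoothing behavior the paragraph above describes.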

Environmental Modeling and Feature Extraction

Models extract salient features so you can build occupancy grids, semantic maps, and traversability cues that inform control and path planning in cluttered or dynamic settings.

Detailed environmental models integrate geometry, semantics, and temporal filtering so you can maintain a coherent world representation under sensor noise and motion. You use TSDFs or voxel grids for dense geometry, occupancy grids and costmaps for planning, and CNN-based segmentation for semantic labeling; feature descriptors, clustering, and data association provide durable landmarks for localization. You must propagate uncertainty, handle dynamic objects explicitly, and compress map representations to meet real-time constraints on embedded hardware.
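To make the occupancy-grid idea concrete, here is a minimal sketch of the common log-odds cell update, which accumulates evidence from repeated range measurements and clamps it so that cells can still change when the scene does. The sensor model probabilities (0.7 for a hit, 0.3 for a miss) and the clamp limits are assumed values for this example.

```python
import math

# Assumed inverse sensor model: a hit suggests 70% occupied, a miss 30%.
L_OCC = math.log(0.7 / 0.3)
L_FREE = math.log(0.3 / 0.7)
L_MIN, L_MAX = -5.0, 5.0  # clamp so dynamic objects can be unlearned

def update_cell(log_odds, hit):
    """Add the evidence from one beam to a cell's log-odds value."""
    log_odds += L_OCC if hit else L_FREE
    return max(L_MIN, min(L_MAX, log_odds))

def probability(log_odds):
    """Convert log-odds back to an occupancy probability."""
    return 1.0 - 1.0 / (1.0 + math.exp(log_odds))

# A cell with an unknown prior (log-odds 0) that is hit three times in a row.
cell = 0.0
for _ in range(3):
    cell = update_cell(cell, hit=True)
p_occupied = probability(cell)
```

Working in log-odds turns the Bayesian update into an addition, which keeps per-cell cost low on embedded hardware.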

Localization and Mapping Strategies

You refine localization by fusing sensor data and map priors; consult Safety-aware autonomous robot navigation, mapping and … for safety-focused methods.

Simultaneous Localization and Mapping (SLAM)

Your SLAM setup should fuse vision and lidar to build and update maps while you track pose estimates in real time.

Global Positioning and Odometry Correction

Combine GNSS fixes with wheel odometry and IMU to correct drift and keep global poses aligned during long runs.

Fusing GNSS with odometry requires careful timestamping, bias estimation, and failure detection; you should implement adaptive filtering, outlier rejection, and map-based corrections to maintain meter-scale accuracy in obstructed environments.
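Outlier rejection for GNSS fixes is often done by gating on the Mahalanobis distance between the fix and the odometry-predicted position. The sketch below assumes independent x and y errors (diagonal covariances) to stay dependency-free; the function name and the example variances are hypothetical.

```python
CHI2_99_2DOF = 9.21  # 99% chi-square threshold for 2 degrees of freedom

def accept_gnss_fix(pred_xy, pred_var, fix_xy, fix_var, gate=CHI2_99_2DOF):
    """Return True if the GNSS fix is statistically consistent with the
    predicted position.

    pred_xy, fix_xy   : (x, y) in metres in a common local frame
    pred_var, fix_var : per-axis variances (diagonal covariance assumption)
    """
    d2 = 0.0
    for p, pv, f, fv in zip(pred_xy, pred_var, fix_xy, fix_var):
        # Squared innovation normalised by the innovation variance.
        d2 += (f - p) ** 2 / (pv + fv)
    return d2 <= gate

# Hypothetical values: a plausible fix and a multipath-style jump.
ok = accept_gnss_fix((0.0, 0.0), (1.0, 1.0), (1.0, 1.0), (1.0, 1.0))
jump = accept_gnss_fix((0.0, 0.0), (1.0, 1.0), (20.0, 0.0), (1.0, 1.0))
```

A rejected fix is simply skipped, so the filter coasts on odometry and IMU until consistent fixes return. An adaptive variant could widen the gate as the predicted variance grows.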

Path Planning and Navigation Algorithms

Algorithms determine how you generate feasible paths, balance optimality with computation, and fuse sensor data into map representations to support reliable motion planning.

Global Pathfinding and Graph-Based Search

Graph-based search lets you compute global routes using A*, Dijkstra, or probabilistic roadmaps on occupancy grids or topological graphs, optimizing cost, clearance, and constraints.
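As an illustration, the following is a compact A* search over a small occupancy grid with 4-connected moves and a Manhattan-distance heuristic. The grid encoding (0 for free, 1 for occupied), unit step costs, and the function name `astar` are assumptions for this sketch; a production planner would typically add clearance costs and kinematic constraints.

```python
import heapq

def astar(grid, start, goal):
    """4-connected A* on a grid of 0 (free) / 1 (occupied) cells.

    Returns the path as a list of (row, col) cells, or None if unreachable.
    """
    rows, cols = len(grid), len(grid[0])

    def h(cell):
        # Manhattan distance is admissible for unit-cost 4-connected moves.
        return abs(cell[0] - goal[0]) + abs(cell[1] - goal[1])

    open_heap = [(h(start), 0, start)]
    came_from = {}
    g = {start: 0}
    while open_heap:
        _, cost, cur = heapq.heappop(open_heap)
        if cur == goal:
            path = [cur]
            while cur in came_from:
                cur = came_from[cur]
                path.append(cur)
            return path[::-1]
        if cost > g[cur]:
            continue  # stale heap entry, a cheaper route was found already
        for dr, dc in ((1, 0), (-1, 0), (0, 1), (0, -1)):
            nxt = (cur[0] + dr, cur[1] + dc)
            if not (0 <= nxt[0] < rows and 0 <= nxt[1] < cols):
                continue
            if grid[nxt[0]][nxt[1]] != 0:
                continue
            new_cost = cost + 1
            if new_cost < g.get(nxt, float("inf")):
                g[nxt] = new_cost
                came_from[nxt] = cur
                heapq.heappush(open_heap, (new_cost + h(nxt), new_cost, nxt))
    return None

# Hypothetical map: a wall forces a detour around the right-hand side.
grid = [
    [0, 0, 0],
    [1, 1, 0],
    [0, 0, 0],
]
path = astar(grid, (0, 0), (2, 0))
```

Setting the heuristic to zero turns the same loop into Dijkstra's algorithm, which is one way to see how the two methods relate.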

Dynamic Obstacle Avoidance and Reactive Planning

Reactive methods help you handle moving agents by combining velocity obstacles, potential fields, or model predictive control for fast trajectory adjustments and collision prevention.

Hybrid systems pair long-horizon planners with short-horizon reactive layers, incorporate prediction and tracking of other agents’ intents, and constrain controllers with safety envelopes so the robot adapts smoothly under sensor latency and dynamic constraints. Extensive simulation and edge-case testing validate behavior in crowded or unpredictable settings.
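A building block shared by velocity-obstacle methods is the question "if both agents keep their current velocities, when do we collide?". The sketch below answers it for two discs by solving the resulting quadratic. It assumes constant velocities and circular footprints, and the function name is hypothetical; a reactive layer could use the result to reject candidate velocities whose time to collision falls inside a safety horizon.

```python
import math

def time_to_collision(rel_pos, rel_vel, radius):
    """Time until two discs touch under constant relative velocity.

    rel_pos : obstacle position minus robot position, (x, y) in metres
    rel_vel : obstacle velocity minus robot velocity, (x, y) in m/s
    radius  : sum of the two disc radii
    Returns 0.0 if already overlapping, None if no collision is predicted.
    """
    px, py = rel_pos
    vx, vy = rel_vel
    a = vx * vx + vy * vy
    b = 2.0 * (px * vx + py * vy)
    c = px * px + py * py - radius * radius
    if c <= 0.0:
        return 0.0
    if a == 0.0 or b >= 0.0:
        return None  # not moving relative to each other, or moving apart
    disc = b * b - 4.0 * a * c
    if disc < 0.0:
        return None  # paths pass each other without touching
    return (-b - math.sqrt(disc)) / (2.0 * a)

# Hypothetical encounter: obstacle 10 m ahead, closing at 2 m/s.
ttc = time_to_collision((10.0, 0.0), (-2.0, 0.0), radius=1.0)
```

In this example the discs touch after 4.5 s, when the centre distance has shrunk from 10 m to the combined radius of 1 m.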

Control Systems and Motion Execution

Control systems translate planned paths into actuator commands so you maintain desired pose and speed while compensating for disturbances and sensor noise.

Kinematic and Dynamic Modeling

Modeling kinematics and dynamics helps you predict motion, set realistic control limits, and tune controllers based on inertia, friction, and actuator limits.
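For a concrete kinematic model, the sketch below integrates a differential-drive robot one time step forward using simple Euler integration. The choice of a differential-drive platform, the parameter names, and the example values are assumptions for illustration; the text above does not commit to a specific drive type.

```python
import math

def diff_drive_step(x, y, theta, v_left, v_right, wheel_base, dt):
    """Advance a differential-drive pose by dt seconds (Euler integration).

    v_left, v_right : wheel ground speeds in m/s
    wheel_base      : distance between the wheels in metres
    """
    v = (v_right + v_left) / 2.0             # forward speed of the body
    omega = (v_right - v_left) / wheel_base  # yaw rate
    x += v * math.cos(theta) * dt
    y += v * math.sin(theta) * dt
    theta += omega * dt
    # Wrap the heading into (-pi, pi] to keep it well conditioned.
    theta = math.atan2(math.sin(theta), math.cos(theta))
    return x, y, theta

# Equal wheel speeds drive straight; opposite speeds rotate in place.
straight = diff_drive_step(0.0, 0.0, 0.0, 1.0, 1.0, wheel_base=0.5, dt=1.0)
spin = diff_drive_step(0.0, 0.0, 0.0, -1.0, 1.0, wheel_base=0.5, dt=0.1)
```

The same model can be run in reverse to set control limits: given maximum wheel speed, the reachable combinations of forward speed and yaw rate follow directly from the two equations at the top of the function.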

Feedback Loops and Model Predictive Control (MPC)

Feedback loops give you continuous error correction, while MPC optimizes future inputs to respect constraints and anticipate disturbances for smoother, safer trajectories.

Implementing MPC requires predictive models, cost functions, constraint handling, and real-time solvers so you can plan control sequences that respect actuator limits and anticipate obstacles. You should balance horizon length, model fidelity, and computation to achieve feasible rates on your hardware, and integrate feedback to correct model mismatch and sensor drift.
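The pieces listed above (a predictive model, a cost function, constraint handling, and a solver) can be seen in miniature in the following sketch. It controls a one-dimensional double integrator and, instead of a real-time optimizer, simply enumerates every sequence of three candidate accelerations over a short horizon. The plant, the cost weights, the horizon, and the limits are all assumed values chosen to keep the example small; it is a toy, not a recommended solver.

```python
from itertools import product

def rollout(pos, vel, accels, dt):
    """Predict (pos, vel) states of a 1D double integrator under accels."""
    states = []
    for a in accels:
        vel += a * dt
        pos += vel * dt
        states.append((pos, vel))
    return states

def mpc_step(pos, vel, target, horizon=4, dt=0.1,
             a_options=(-1.0, 0.0, 1.0), v_max=1.0):
    """Return the first acceleration of the cheapest feasible sequence."""
    best_cost, best_first = float("inf"), 0.0
    for seq in product(a_options, repeat=horizon):
        states = rollout(pos, vel, seq, dt)
        # Constraint handling: discard sequences that exceed the speed limit.
        if any(abs(v) > v_max for _, v in states):
            continue
        # Cost: squared tracking error plus a small control-effort penalty.
        cost = sum((p - target) ** 2 for p, _ in states)
        cost += 0.01 * sum(a * a for a in seq)
        if cost < best_cost:
            best_cost, best_first = cost, seq[0]
    return best_first

# Receding horizon: apply only the first input, then re-plan from feedback.
pos, vel = 0.0, 0.0
for _ in range(5):
    a = mpc_step(pos, vel, target=1.0)
    (pos, vel), = rollout(pos, vel, [a], dt=0.1)
```

Enumeration costs grow exponentially with the horizon (here 3 to the power of 4 sequences), which is a small-scale view of the trade-off between horizon length and computation mentioned above.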

Artificial Intelligence in Autonomous Decision Making

AI enables you to fuse sensor inputs, predict outcomes, and make split-second decisions, combining probabilistic models and learned policies so your robot handles uncertainty, prioritizes safety, and achieves mission goals under changing operational conditions.

Deep Learning for Object Recognition

Convolutional networks let you detect objects from camera feeds and depth maps, support real-time classification and bounding-box regression, and improve with transfer learning and augmentation so your system maintains reliable perception across clutter and varying illumination.

Reinforcement Learning for Adaptive Navigation

Policy-gradient and Q-learning approaches enable you to acquire adaptive path strategies from trial experience, handling dynamic obstacles and shifting objectives while enforcing safety constraints through constrained rewards or hierarchical controllers.

You can accelerate training with high-fidelity simulation and domain randomization, then apply sim-to-real techniques and fine-tuning on hardware. Prioritizing sample-efficient algorithms, combining model-based planning with model-free policies, and using imitation or offline data reduces risky exploration, while reward shaping and curriculum schedules improve convergence and deployment safety.
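To show the Q-learning update itself, here is a tabular sketch on a toy corridor: five cells in a row, a reward of 1 for reaching the rightmost cell, and two actions (left, right). The environment, hyperparameters, and purely random exploration are assumptions chosen for brevity; real navigation tasks need function approximation and far more careful exploration than this.

```python
import random

def train_corridor(n_states=5, episodes=300, alpha=0.5, gamma=0.9, seed=0):
    """Tabular Q-learning on a 1D corridor; returns the Q-table.

    Actions: 0 = left, 1 = right. The last cell is the terminal goal.
    """
    rng = random.Random(seed)
    q = [[0.0, 0.0] for _ in range(n_states)]
    goal = n_states - 1
    for _ in range(episodes):
        s = 0
        for _ in range(200):  # step cap per episode
            a = rng.randrange(2)  # random behavior policy (off-policy learning)
            s_next = max(0, s - 1) if a == 0 else s + 1
            done = s_next == goal
            reward = 1.0 if done else 0.0
            # Bootstrapped target: no future value beyond the terminal cell.
            target = reward if done else reward + gamma * max(q[s_next])
            q[s][a] += alpha * (target - q[s][a])
            s = s_next
            if done:
                break
    return q

q_table = train_corridor()
# Greedy policy for the non-terminal cells.
policy = ["right" if q[1] > q[0] else "left" for q in q_table[:-1]]
```

Because Q-learning is off-policy, the greedy policy extracted at the end can differ from the random behavior used to gather experience. On hardware that random behavior is exactly the risky exploration the paragraph above suggests limiting through simulation or offline data.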

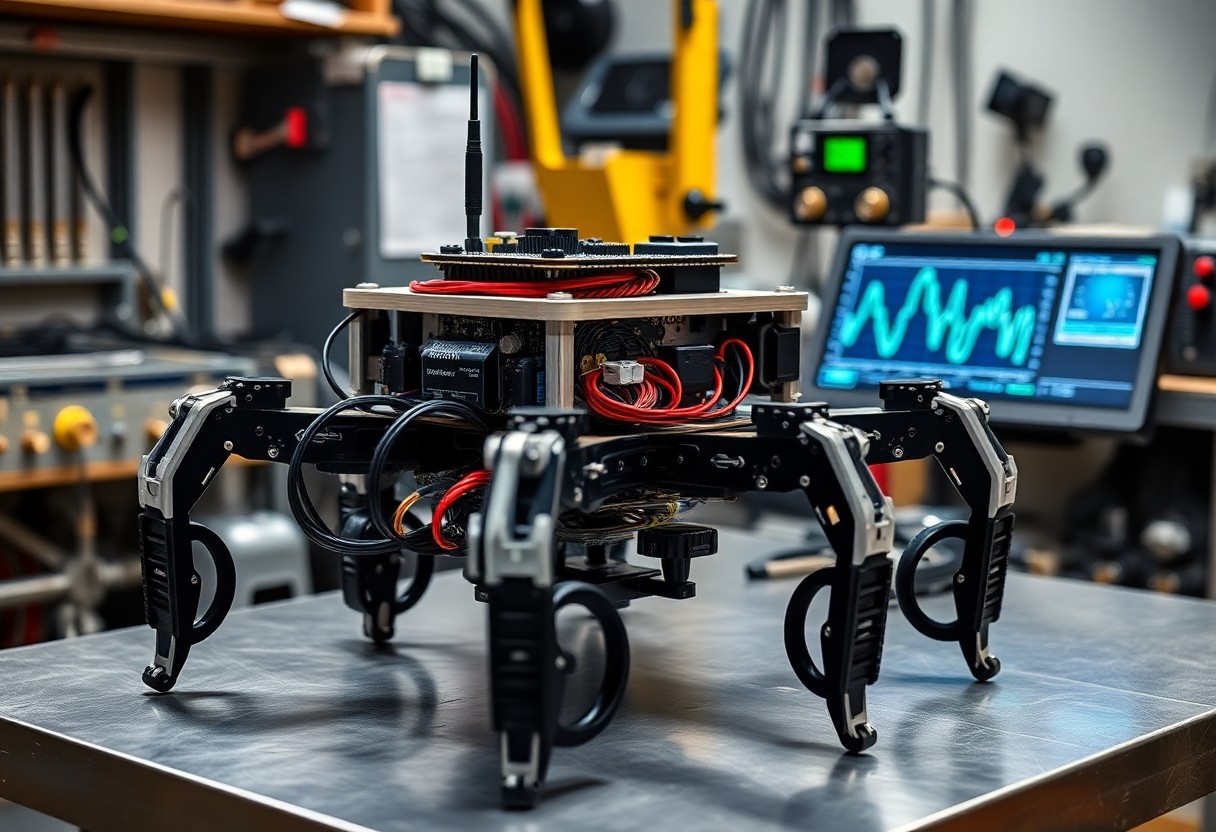

System Architecture and Integration

Architecture ties sensors, actuators, compute and power into coherent workflows you design, ensuring clear data paths, fault isolation, and upgradeability without reworking core systems.

Robot Operating System (ROS) Frameworks

ROS gives you modular middleware and tooling to compose nodes, manage messages, and run simulations while you refine behaviors and testing pipelines.

Real-time Processing and Latency Management

Latency constraints push you toward deterministic scheduling, prioritized message paths, and hardware offload so control loops meet millisecond deadlines.

Determinism in processing ensures you can bound control-loop latency by using real-time kernels, dedicated MCUs, or FPGA offload for tight feedback loops. You must instrument pipelines with high-resolution timestamps, PTP synchronization, and network QoS to isolate jitter sources. Profiling with hardware counters and latency histograms lets you prioritize tasks, tune interrupt affinity, and choose between polling and interrupt-driven I/O. Testing under worst-case loads and fault injection verifies deadline adherence before field trials.
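A first step toward the latency histograms mentioned above is simply recording per-iteration execution time and summarizing the tail. The sketch below times a callable with `time.perf_counter_ns` and reports the worst case, a nearest-rank 99th percentile, and the number of deadline misses. The function names and the 1000 microsecond deadline are assumptions for illustration, and timing from Python only approximates what a real-time control loop would see.

```python
import time

def measure_loop(step, iterations):
    """Run step() repeatedly and return per-iteration latencies in microseconds."""
    samples = []
    for _ in range(iterations):
        start = time.perf_counter_ns()
        step()
        samples.append((time.perf_counter_ns() - start) / 1000.0)
    return samples

def latency_report(samples_us, deadline_us):
    """Summarize latencies: worst case, nearest-rank p99, and deadline misses."""
    ordered = sorted(samples_us)
    n = len(ordered)
    rank = (99 * n + 99) // 100  # ceil(0.99 * n) using integer arithmetic
    return {
        "max": ordered[-1],
        "p99": ordered[rank - 1],
        "misses": sum(1 for s in ordered if s > deadline_us),
    }

# Synthetic example: 99 fast iterations and one 1.5 ms outlier.
report = latency_report([100.0] * 99 + [1500.0], deadline_us=1000.0)
```

Here the p99 still looks healthy while the maximum reveals a missed deadline, which is why worst-case figures matter more than averages or percentiles for hard control deadlines.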

To wrap up

Reliable autonomous navigation requires you to integrate precise sensing, model-based and learning-based controllers, and safety constraints so you can achieve dependable path planning, collision avoidance, and stable motion control, validating performance through simulation and field tests.